Why Agentic AI Mandates Hardware-Software Co-Design

Most AI systems today are still built with a familiar mindset.

Train a model, deploy it on the infrastructure, and optimize performance over time. This approach works well when workloads are predictable and execution paths are clear.

But that’s no longer enough. Agentic AI systems change the equation because they do not follow a fixed, predictable execution path.

These systems are not confined to single-pass inference. They operate through continuous loops of interpretation, reasoning, action, and adaptation, often coordinating across multiple tools, environments, and decision layers that span the edge, the near edge, and the cloud.

As a result, the challenge is no longer just model accuracy or scale; it is how efficiently the entire system executes toward its defined goal.

This is where hardware-software co-design becomes critical. Not as an optimization layer added later, but as a foundational approach to building agentic systems that can perform reliably in real-world conditions.

The Core Problem: Agentic AI is Execution-Heavy, Not Just Compute-Heavy

Traditional AI deployments focused on faster inference, higher throughput, and better model performance. Once the model was efficient, the system was largely considered optimized.

Agentic systems operate very differently. They are not just compute-heavy; they are execution-heavy.

A single task in an agentic system is rarely a one-step process. It involves multiple reasoning steps, repeated model invocations, continuous context updates, calls to external tools or systems, and coordination across multiple agents. What this creates is not a linear pipeline, but a continuous loop of decision-making and action.

Within this loop, three factors begin to dominate system behavior:

- Memory access for maintaining and retrieving context

- Data movement between components

- Decision latency, the time taken between successive steps

These are no longer secondary concerns; they directly influence how the system performs.

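When any of these elements is inefficient, the impact is not isolated. Delays compound across iterations: each step waits on the one before it, and as accumulated context grows, each step can take longer than the last, so total execution time grows faster than the number of steps.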

This is where traditional system design, built for predictable, one-pass execution, starts to break down.
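To make the compounding effect concrete, here is a toy cost model, not a measurement. It assumes, hypothetically, that each loop step pays a fixed compute cost plus a context-access cost proportional to how much context has accumulated, and that every step appends a fixed amount of new context. The class, field names, and numbers are illustrative assumptions. Under these assumptions, total loop time grows roughly quadratically with the number of steps rather than linearly.

```python
from dataclasses import dataclass


@dataclass
class StepCostModel:
    """Hypothetical per-step cost model for an agentic loop (illustrative numbers only)."""
    compute_ms: float = 20.0         # fixed reasoning/inference cost per step
    access_ms_per_kb: float = 0.05   # cost to read 1 KB of accumulated context
    context_growth_kb: float = 8.0   # new context appended by each step


def loop_time_ms(steps: int, model: StepCostModel) -> float:
    """Total time for a sequential loop in which each step reads all prior context."""
    total = 0.0
    context_kb = 0.0
    for _ in range(steps):
        # Each step depends on the previous one, so its cost adds serially.
        total += model.compute_ms + model.access_ms_per_kb * context_kb
        context_kb += model.context_growth_kb
    return total


if __name__ == "__main__":
    m = StepCostModel()
    for n in (10, 20, 40):
        print(f"{n:>3} steps: {loop_time_ms(n, m):8.1f} ms")
```

In this toy model, doubling the step count from 10 to 20 more than doubles total time. Driving `access_ms_per_kb` toward zero, which stands in for context that stays close to compute, brings the curve back toward linear.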

Hybrid Architectures Are the Reality

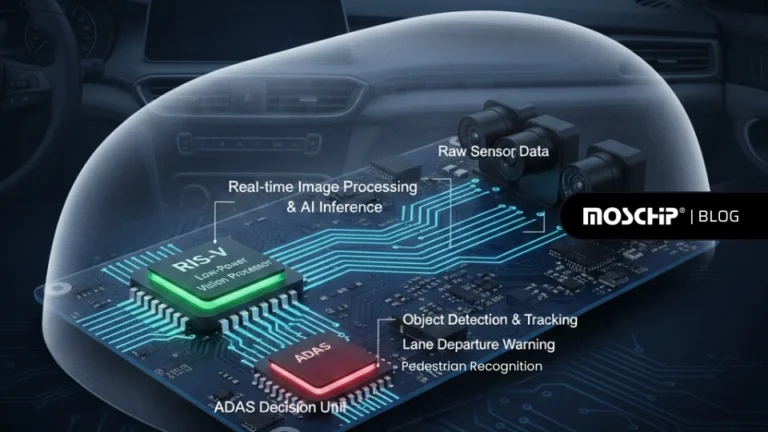

Agentic AI systems do not run in a single environment. They operate across a mix of edge, near-edge, and cloud systems.

This is not just an architectural choice. It is driven by real system needs. Latency-sensitive decisions must happen close to where data is generated, while more complex reasoning and model updates require higher compute capability in centralized environments.

At the same time, not all workloads can run everywhere efficiently. Running everything in the cloud increases latency and cost, while running everything at the edge limits model performance and capability.

This creates a hybrid architecture, where workloads are distributed based on latency requirements, cost constraints, model effectiveness, and available compute.

And this is exactly where things start to break when hardware and software are not designed together.
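The placement logic described above can be sketched as a filter-then-rank function: discard tiers that miss the latency budget, lack the model capability, or have no free compute, then pick the cheapest remaining one. The tier names, latencies, costs, and capability levels below are hypothetical placeholders, not figures from any real deployment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    """One execution environment; all example values below are hypothetical."""
    name: str
    round_trip_ms: float   # latency to reach the tier and get a result back
    cost_per_call: float   # relative cost units
    max_capability: int    # largest model size class the tier can run
    free_slots: int        # compute slots currently available


@dataclass(frozen=True)
class Task:
    name: str
    latency_budget_ms: float
    required_capability: int


def place(task: Task, tiers: list[Tier]) -> Optional[Tier]:
    """Return the cheapest tier that meets latency, capability, and capacity needs."""
    feasible = [
        t for t in tiers
        if t.round_trip_ms <= task.latency_budget_ms
        and t.max_capability >= task.required_capability
        and t.free_slots > 0
    ]
    if not feasible:
        return None  # nothing fits: split the task, relax its budget, or add capacity
    # Prefer lower cost, then lower latency, among tiers that satisfy every constraint.
    return min(feasible, key=lambda t: (t.cost_per_call, t.round_trip_ms))


EXAMPLE_TIERS = [
    Tier("edge", round_trip_ms=2.0, cost_per_call=1.0, max_capability=1, free_slots=4),
    Tier("near-edge", round_trip_ms=15.0, cost_per_call=2.0, max_capability=2, free_slots=8),
    Tier("cloud", round_trip_ms=80.0, cost_per_call=5.0, max_capability=3, free_slots=100),
]

if __name__ == "__main__":
    for task in (
        Task("obstacle-check", latency_budget_ms=10.0, required_capability=1),
        Task("summarize-session", latency_budget_ms=50.0, required_capability=2),
        Task("replan-mission", latency_budget_ms=500.0, required_capability=3),
    ):
        tier = place(task, EXAMPLE_TIERS)
        print(task.name, "->", tier.name if tier else "no feasible tier")
```

If the edge tier is full or under-provisioned, the latency-critical task has no feasible placement at all. That is the mismatch between where computation needs to happen and what the hardware supports.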

Where Systems Break Without Co-Design

When hardware and software are designed independently, the system cannot adapt to the execution demands of agentic AI. The problem is not just inefficiency; it is a mismatch between where computation needs to happen and what the hardware is built to support.

Agentic systems require decisions to be made at different points. Latency-sensitive operations must execute close to where data is generated, pushing compute toward the edge, while large-scale reasoning and training depend on centralized, high-performance cloud infrastructure.

Without co-design, this split breaks down.

Software may require inference at the edge, but if the hardware lacks dedicated acceleration or efficient memory access, execution becomes slow and inefficient, forcing tasks back to the cloud. This increases latency and introduces network dependency. At the same time, cloud systems, though provisioned for scale, remain inefficient for iterative, stateful workloads unless they are aligned with those workloads’ execution patterns.

The result is a fragmented system where compute placement is driven by hardware limitations instead of actual system needs. Data moves more than it should, decision loops stretch across edge and cloud, and latency becomes unpredictable. As execution shifts between environments, it also becomes harder to maintain consistent context, leading to decisions based on outdated or incomplete information. As the system scales, these issues only get worse, increasing delays and coordination overhead.

Co-design fixes this by aligning how the system runs with what the hardware can support, so each part executes where it is most efficient without adding unnecessary overhead.

Why Hardware-Software Co-Design is Required

Hardware-software co-design solves a very practical problem. Agentic systems don’t run in a straight line. They continuously read context, make decisions, and act in loops.

Now imagine software is trying to do all of this, but the hardware is not built for it. Context takes longer to access, data keeps moving between components, and each step in the loop slows down. Over time, these small delays add up and affect the entire system.

This is where things begin to break. The system is not slow because the models are complex, but because the way the software runs does not match how the hardware works.

Co-design fixes this by bringing both together from the start. The software defines how the system needs to behave, such as how often it reads context, where decisions are made, and what needs to run in real time. The hardware is then designed or chosen to support that behavior, so context is easier to access, data does not move unnecessarily, and compute is placed where it is actually needed.

As a result, the system runs more smoothly. Context is available when needed, delays are reduced, and decision loops become faster and more consistent.

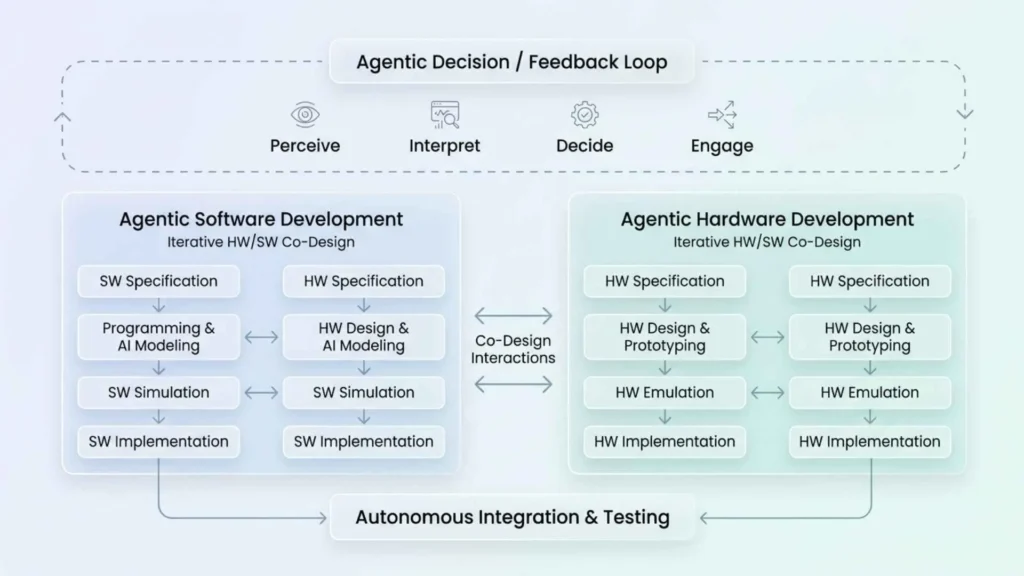

How Co-Design Works in Practice

The impact of co-design becomes clearer when you look at how hardware and software evolve together across the product development lifecycle.

Hardware and software are developed in parallel, with continuous alignment at each stage.

What You Can Expect to Improve with Co-Design

Inside the execution loop, the impact of co-design shows up in four areas.

1. Context Handling Becomes More Efficient

In a co-designed system, memory is not treated as a generic layer. It is structured based on how context is actually used during execution.

The software defines what context needs to be read often, what gets updated at every step, and what can be stored for reuse. The hardware is then aligned to support this, through better memory organization, caching, and data placement.

Frequently used context is kept closer to compute, such as in faster or on-device memory. Updates are handled in a way that avoids repeated writes across layers, and data retrieval is designed to avoid unnecessary movement between memory levels.

This reduces one of the biggest hidden costs in agentic systems, which is context latency.
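As an illustration of keeping frequently used context closer to compute, here is a two-tier context store: a small, bounded hot tier (standing in for faster or on-device memory) with least-recently-used eviction in front of a larger cold tier. The class name and capacity are hypothetical; in a real co-designed system these decisions live in hardware caches, memory placement, and runtime policy, not in a Python dict.

```python
from collections import OrderedDict
from typing import Any


class TieredContextStore:
    """Hypothetical context store: bounded LRU hot tier over an unbounded cold tier."""

    def __init__(self, hot_capacity: int) -> None:
        self.hot: OrderedDict[str, Any] = OrderedDict()
        self.cold: dict[str, Any] = {}
        self.hot_capacity = hot_capacity
        self.hot_hits = 0
        self.cold_hits = 0

    def put(self, key: str, value: Any) -> None:
        # Updates go to the hot tier only; the cold tier is written once, on eviction,
        # instead of on every update. Drop any stale cold copy of the same key.
        self.cold.pop(key, None)
        self.hot[key] = value
        self.hot.move_to_end(key)
        self._evict_if_needed()

    def get(self, key: str) -> Any:
        if key in self.hot:
            self.hot_hits += 1
            self.hot.move_to_end(key)  # mark as most recently used
            return self.hot[key]
        value = self.cold.pop(key)  # raises KeyError if the key was never stored
        self.cold_hits += 1
        self.put(key, value)        # promote to the hot tier on access
        return value

    def _evict_if_needed(self) -> None:
        while len(self.hot) > self.hot_capacity:
            old_key, old_value = self.hot.popitem(last=False)  # least recently used
            self.cold[old_key] = old_value


if __name__ == "__main__":
    store = TieredContextStore(hot_capacity=2)
    store.put("goal", "inspect line 3")
    store.put("plan", ["scan", "report"])
    store.put("tool_output", {"status": "ok"})  # evicts "goal" to the cold tier
    store.get("plan")                            # hot hit
    store.get("goal")                            # cold hit, promoted back to hot
    print(list(store.hot), list(store.cold), store.hot_hits, store.cold_hits)
```

The design choice being illustrated is that placement follows access frequency: context read on every step stays hot, while context touched rarely pays the slower path only when it is actually needed.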

2. Data Movement is Reduced at the Source

In traditional systems, data movement is a side effect of disconnected layers. Co-design makes it a design decision. Software defines how data flows between models, agents, and tools, while hardware is structured to support that flow with minimal movement through compute placement, tighter coupling, and optimized interconnects.

Compute is positioned closer to where data is generated or consumed, reducing unnecessary transfers across CPU, GPU, and storage layers. At the same time, improved data locality minimizes repeated movement, and shared pathways reduce serialization and communication overhead.

The result is a system where data movement no longer dominates execution time, allowing faster progression through reasoning steps.
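A back-of-the-envelope way to see why compute placement matters is to count how much data crosses a device boundary on each iteration of the loop. The stage names, device labels, and sizes below are hypothetical, and the sketch deliberately ignores bandwidth, overlap, and zero-copy paths that real interconnects may provide; it only counts boundary crossings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    device: str       # hypothetical labels such as "storage", "cpu", "gpu"
    output_mb: float  # data this stage hands to the next stage, per loop iteration


def mb_moved_per_iteration(pipeline: list[Stage]) -> float:
    """Count data that crosses a device boundary between consecutive stages."""
    moved = 0.0
    for producer, consumer in zip(pipeline, pipeline[1:]):
        if producer.device != consumer.device:
            moved += producer.output_mb
    return moved


# The same four stages under two hypothetical placements.
SPLIT = [
    Stage("load_context", "storage", 64.0),
    Stage("preprocess", "cpu", 32.0),
    Stage("reason", "gpu", 4.0),
    Stage("postprocess", "cpu", 1.0),
]
CO_LOCATED = [
    Stage("load_context", "storage", 64.0),
    Stage("preprocess", "gpu", 32.0),
    Stage("reason", "gpu", 4.0),
    Stage("postprocess", "gpu", 1.0),
]

if __name__ == "__main__":
    iterations = 50
    for label, pipeline in (("split", SPLIT), ("co-located", CO_LOCATED)):
        total = mb_moved_per_iteration(pipeline) * iterations
        print(f"{label:>10}: {total:.0f} MB moved over {iterations} iterations")
```

With these made-up sizes, co-locating the stages that exchange intermediate data cuts per-iteration transfers from 100 MB to 64 MB, and because the loop repeats, that difference is paid on every iteration.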

3. Decision Loops Become Tighter and More Predictable

Agentic systems rely on fast, iterative decision loops where each step depends on the outcome of the previous one. Without alignment, these loops become uneven. Some steps execute quickly while others stall due to delays in scheduling, memory access, or data transfer. Over multiple iterations, this variability compounds, making the system slow and unpredictable.

Co-design addresses this by aligning execution behavior with how resources are provisioned and scheduled. Instead of optimizing only for aggregate throughput, the system is structured around reducing per-step latency. This means compute is made consistently available for sequential decision steps, memory access paths are stabilized to avoid unpredictable delays, and scheduling is tuned to prioritize continuity of execution rather than isolated task efficiency.

As a result, the decision loop stops behaving like a series of disconnected steps and starts functioning as a continuous flow. Each iteration executes within a tighter latency bound, reducing variance across steps and making the system more responsive and stable under real workloads.
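Because each step depends on the previous one, any per-step wait is paid serially, once per iteration. The toy comparison below contrasts a hypothetical throughput-first profile, which holds each request for a batching window and occasionally hits a slow memory or data path, with a latency-first profile that dispatches immediately over stable paths. The profile names, window size, compute time, and stall pattern are all assumptions chosen for illustration.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class LoopProfile:
    """Hypothetical execution profile for a chain of dependent decision steps."""
    name: str
    queue_wait_ms: float  # wait before dispatch, e.g. a batching window
    stall_ms: float       # extra delay when a step hits a slow memory or data path
    stall_every: int      # a stall hits every Nth step (0 means never)


def step_latencies(compute_ms: float, steps: int, profile: LoopProfile) -> list[float]:
    """Per-step latency; steps are sequential, so none of these overlap."""
    latencies = []
    for i in range(steps):
        latency = compute_ms + profile.queue_wait_ms
        if profile.stall_every and (i + 1) % profile.stall_every == 0:
            latency += profile.stall_ms
        latencies.append(latency)
    return latencies


def summarize(latencies: list[float]) -> dict[str, float]:
    return {
        "total_ms": sum(latencies),
        "mean_ms": statistics.mean(latencies),
        "stdev_ms": statistics.pstdev(latencies),
        "worst_ms": max(latencies),
    }


THROUGHPUT_FIRST = LoopProfile("throughput-first", queue_wait_ms=10.0, stall_ms=40.0, stall_every=5)
LATENCY_FIRST = LoopProfile("latency-first", queue_wait_ms=0.0, stall_ms=0.0, stall_every=0)

if __name__ == "__main__":
    for profile in (THROUGHPUT_FIRST, LATENCY_FIRST):
        stats = summarize(step_latencies(compute_ms=15.0, steps=20, profile=profile))
        print(profile.name, {k: round(v, 1) for k, v in stats.items()})
```

The specific numbers carry no weight; what transfers is the shape of the result. In a dependent chain, a per-step wait is multiplied by the number of steps, and occasional stalls show up directly as variance across steps, which is what the latency-first profile removes.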

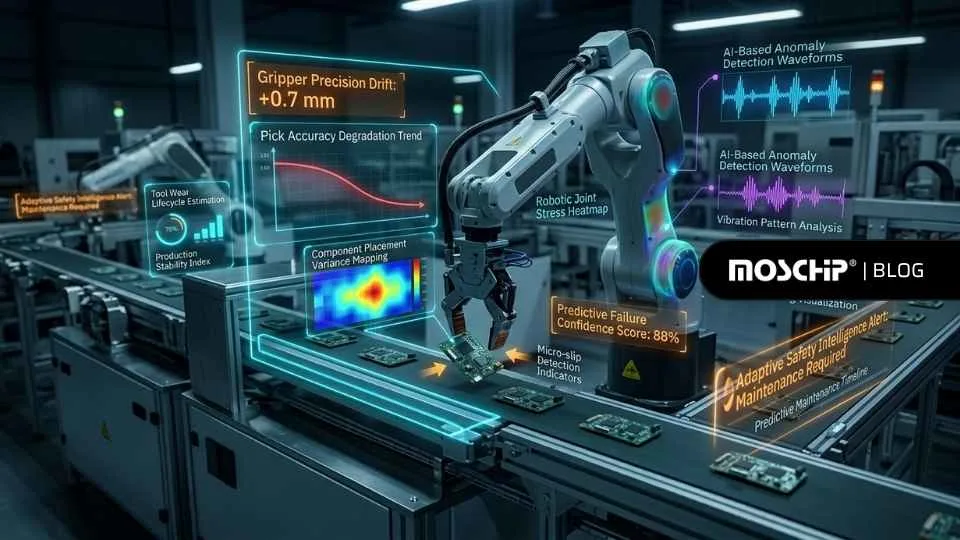

4. Multi-Agent Coordination Becomes Scalable

In multi-agent systems, agents need to work together by sharing information and making decisions at the same time. Without co-design, this becomes difficult because hardware and software are not aligned with each other.

From the software side, many agents try to read and update the same data, run models, and communicate with each other at once. From the hardware side, all of this activity goes through shared memory, limited data paths, and the same compute resources. This creates congestion. Some agents get delayed, some repeat work, and others act on outdated information because they are not getting updates fast enough.

As more agents are added, the problem gets worse. Delays increase, coordination becomes harder, and the system slows down even though more compute is available.

Co-design fixes this by making sure the way agents interact is supported by the hardware. Software defines how agents share data and communicate, and the hardware is set up to handle this efficiently, with better memory access, faster data exchange, and balanced use of compute resources.

As a result, agents can work in parallel without getting in each other’s way. They get the right information at the right time, and the system continues to perform well even as it scales.
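On the software side, one generic pattern for the "acting on outdated information" problem is a shared context with versioned, compare-and-set writes: an agent whose read has gone stale is told to re-read instead of silently overwriting newer data. This is a standard optimistic-concurrency sketch with hypothetical names, shown with Python threads; it is not a description of any particular product.

```python
import threading
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Snapshot:
    version: int
    value: Any


class SharedContext:
    """Hypothetical versioned key-value context shared by several agents."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._data: dict[str, Snapshot] = {}

    def read(self, key: str) -> Snapshot:
        with self._lock:
            return self._data.get(key, Snapshot(version=0, value=None))

    def write(self, key: str, value: Any, expected_version: int) -> bool:
        """Compare-and-set: succeeds only if nobody has written since the caller's read."""
        with self._lock:
            current = self._data.get(key, Snapshot(version=0, value=None))
            if current.version != expected_version:
                return False  # caller acted on outdated information and must re-read
            self._data[key] = Snapshot(version=current.version + 1, value=value)
            return True


def append_finding(ctx: SharedContext, agent: str, finding: str) -> None:
    """Retry loop an agent uses to add its finding without clobbering others."""
    while True:
        snap = ctx.read("findings")
        updated = (snap.value or []) + [f"{agent}: {finding}"]
        if ctx.write("findings", updated, snap.version):
            return


if __name__ == "__main__":
    ctx = SharedContext()
    threads = [
        threading.Thread(target=append_finding, args=(ctx, f"agent-{i}", "done"))
        for i in range(8)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    print(len(ctx.read("findings").value))  # 8: no update was lost
```

The lock only makes each compare-and-set atomic. As agent counts grow, contention on that shared path and the retries it causes are exactly the congestion described above, which is why memory access and data exchange on the hardware side matter as much as the protocol.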

Wrapping Up

Agentic AI systems do not fail because models are weak. They fail when the underlying system cannot sustain continuous, context-driven execution.

Hardware-software co-design closes this gap by aligning how agentic systems behave with how infrastructure actually operates. It ensures that decision loops remain efficient, context stays accessible, and coordination scales without introducing friction.

This is where product engineering approaches become critical. At MosChip Technologies, co-design is applied across the full stack, bringing together edge, accelerated compute, and cloud to support real-world agentic workloads.

With platforms like AgenticSky, the focus shifts from running isolated models to enabling complete agentic systems, faster.

Because in agentic AI, the real challenge is not intelligence. It is sustaining it. Talk to us for your next Agentic AI innovation.


Smishad Thomas is a Technical Marketing Manager at MosChip. He has over 13 years of experience in technology marketing, branding, and content leadership. He has a keen interest in product engineering and loves developing convincing stories that translate technical innovations into clear, engaging messaging that resonates with business audiences.