Addressing OT-IT Fragmentation in Industrial IoT

Many organizations worldwide have launched Industry 4.0 initiatives; however, most of them have not seen complete success.

Despite strong investments and clear intent, execution often falls short. While there are several reasons Industry 4.0 initiatives fail, a key one is the fragmentation of Operational Technology (OT) and Information Technology (IT), which limits the true value of Industrial IoT.

This fragmentation builds gradually on the shop floor. OT environments generate large volumes of machine data, but it is often inconsistent, highly vendor-specific, and difficult to standardize or reuse across systems.

These are foundational challenges that Industrial IoT deployments must overcome to deliver any real value.

At the same time, data is frequently siloed across standalone assets, production lines, and plants, limiting visibility beyond isolated applications.

Legacy systems with long operational lifecycles further compound the challenge. Replacing these assets is typically expensive, risky, and disruptive to operations.

Multi-vendor environments, incompatible protocols, stringent security requirements, and organizational risk aversion further restrict integration efforts. A shortage of skilled people who can bridge both OT and IT domains adds further complexity.

As a result, data remains fragmented and disconnected, which prevents a reliable single source of truth and limits Industry 4.0 outcomes. This points to a deeper issue: OT fragmentation by design.

Why OT–IT Fragmentation Happens

To really understand why fragmentation continues, we should look at how Operational Technology (OT) and Information Technology (IT) have developed over time. They were created for completely different objectives. OT systems are all about ensuring uptime, safety, and reliable control through tools like PLCs and SCADA, which makes them quite inflexible and hardware-centric.

In contrast, IT systems focus on data processing, scalability, and flexibility, making them more adaptable and software-driven. In essence, they were never meant to work in tandem.

The problem is further complicated by legacy systems that often rely on outdated equipment and proprietary protocols. Replacing these systems can be both costly and risky, which is why they tend to stick around. Their persistence prevents integration with modern IT infrastructure such as cloud platforms and APIs, and it is a core barrier to realizing Industrial IoT at scale.

Organizational silos create additional challenges. OT and IT teams often have different priorities, skill sets, and key performance indicators (KPIs), which may lead to a lack of alignment. Security concerns and a tendency to avoid risk further hinder integration, particularly in settings where downtime is not an option.

Moreover, the real-time operational needs of OT, such as rapid control actions, alarm handling, safety responses, and process stability, often conflict with IT’s tolerance for latency.

When you factor in the lack of standardization, changing architectures, and an uncertain return on investment (ROI), it results in a disjointed, patchwork system that continues to slow down the progress of Industry 4.0.

To move beyond these limitations, organizations must shift from fragmented systems to a unified, scalable industrial intelligence framework.

The Real Reason Industry 4.0 Fails: Misaligned OT–IT Data

The core issue is that most approaches prioritize connectivity over data alignment. These are fundamentally different objectives.

Why Raw Data Alone Doesn’t Deliver Value

The initial instinct in many deployments is to connect machines and stream data into a central system. While this creates the illusion of progress, simply moving raw data through a pipeline does not equate to usable data.

Normalization means making machine data look and behave the same before it moves upstream, using consistent names, formats, units, and timing. Without it, different machines can report the same thing in completely different ways, making the data harder to use.

Without normalization at the source, the data arriving downstream is inconsistent, unstructured, and too complex for reliable analysis. Teams then spend more time cleaning data than extracting valuable insights.
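To make the idea of normalization concrete, here is a minimal Python sketch. It assumes two machines that report the same temperature under different field names and units; the field names (`Temp_F`, `tmp_c`), asset IDs, and the `Reading` structure are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    asset_id: str
    measurement: str  # canonical name, e.g. "temperature"
    value: float
    unit: str         # canonical unit, e.g. "degC"
    timestamp: str    # ISO 8601 string in UTC


# Hypothetical mapping: raw field name -> (canonical name, canonical unit, converter)
FIELD_MAP = {
    "Temp_F": ("temperature", "degC", lambda f: (f - 32.0) * 5.0 / 9.0),
    "tmp_c": ("temperature", "degC", lambda c: float(c)),
}


def normalize(asset_id, field, raw_value, epoch_seconds):
    """Map a vendor-specific field to a canonical name, unit, and UTC timestamp."""
    name, unit, convert = FIELD_MAP[field]
    ts = datetime.fromtimestamp(epoch_seconds, tz=timezone.utc).isoformat()
    return Reading(asset_id, name, round(convert(raw_value), 2), unit, ts)


# Two machines reporting the same physical quantity in different ways
a = normalize("press-01", "Temp_F", 212.0, 1_700_000_000)
b = normalize("press-02", "tmp_c", 100, 1_700_000_000)
```

After normalization, both readings share the same measurement name, unit, and timestamp format, so downstream consumers no longer need per-machine cleaning logic.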

Why Cloud-First Doesn’t Deliver Real-Time Results

Cloud platforms are commonly positioned as central aggregation layers, but latency is the core issue they cannot overcome. Sending data to the cloud and waiting for it to be processed introduces delays that are incompatible with real‑time operational needs.

On the shop floor, decisions often need to happen in milliseconds, not seconds. The round‑trip latency of cloud processing (network transfer, ingestion, computation, and response) is more than a technical drawback.

It is a fundamental operational constraint that limits how quickly systems can react, adjust, or intervene.

When responsiveness matters, relying solely on cloud‑based processing puts real‑time decision‑making out of reach.
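The latency argument can be expressed as a simple budget check. The sketch below sums the round-trip stages named above and compares the total with a control deadline. All numbers are placeholder values picked for illustration, not measurements of any real system, and the function names are hypothetical.

```python
# Hypothetical per-stage latencies in milliseconds (placeholders, not measurements)
CLOUD_ROUND_TRIP_MS = {
    "network_transfer": 40.0,
    "ingestion": 50.0,
    "computation": 30.0,
    "response": 40.0,
}


def round_trip_ms(stages):
    """Total latency is the sum of every stage the data passes through."""
    return sum(stages.values())


def meets_deadline(stages, deadline_ms):
    """True only if the whole round trip fits inside the control deadline."""
    return round_trip_ms(stages) <= deadline_ms


total = round_trip_ms(CLOUD_ROUND_TRIP_MS)
cloud_ok = meets_deadline(CLOUD_ROUND_TRIP_MS, deadline_ms=10.0)
edge_ok = meets_deadline({"local_processing": 4.0}, deadline_ms=10.0)
```

Whatever the real figures are in a given plant, the structure of the check stays the same: every stage added to the path consumes part of the deadline.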

Why Data Silos Still Exist in Industry 4.0

OEE tracking, maintenance monitoring, and quality management are commonly developed as independent initiatives, each with its own data model, pipeline, and team. While each delivers individual value, their inherent isolation prevents cross-functional insight correlation. The same asset may be represented differently across these disconnected systems.

The result is a technology stack where systems are connected, but the underlying data is misaligned. Without data alignment, solutions struggle to scale. Each new plant added to the program inherits the same structural problems, regardless of location.
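One way to picture the alignment problem is an identity map that resolves each system's local asset name to one canonical ID. The sketch below is illustrative only: the system names, local identifiers, and record fields are hypothetical.

```python
# Hypothetical local identifiers used by three independent systems for one machine
ALIASES = {
    ("oee", "LINE2-PRESS-A"): "press-01",
    ("maintenance", "EQ-000417"): "press-01",
    ("quality", "Press A (L2)"): "press-01",
}


def canonical_asset(system, local_id):
    """Resolve a system-specific identifier; returns None when no mapping exists."""
    return ALIASES.get((system, local_id))


def correlate(records):
    """Group records from different systems under their canonical asset ID."""
    grouped = {}
    for rec in records:
        key = canonical_asset(rec["system"], rec["asset"])
        grouped.setdefault(key, []).append(rec)
    return grouped


records = [
    {"system": "oee", "asset": "LINE2-PRESS-A", "event": "unplanned stop"},
    {"system": "maintenance", "asset": "EQ-000417", "event": "bearing replaced"},
    {"system": "quality", "asset": "Press A (L2)", "event": "scrap spike"},
    {"system": "quality", "asset": "Unknown-7", "event": "inspection"},
]
by_asset = correlate(records)
```

Records that fall under `None` are the ones that stay invisible to cross-functional analysis until someone maps them.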

Therefore, solving fragmentation requires more than just better tools; it demands a proper data foundation built from the ground up.

From OT–IT Fragmentation to Scalable Industrial Intelligence

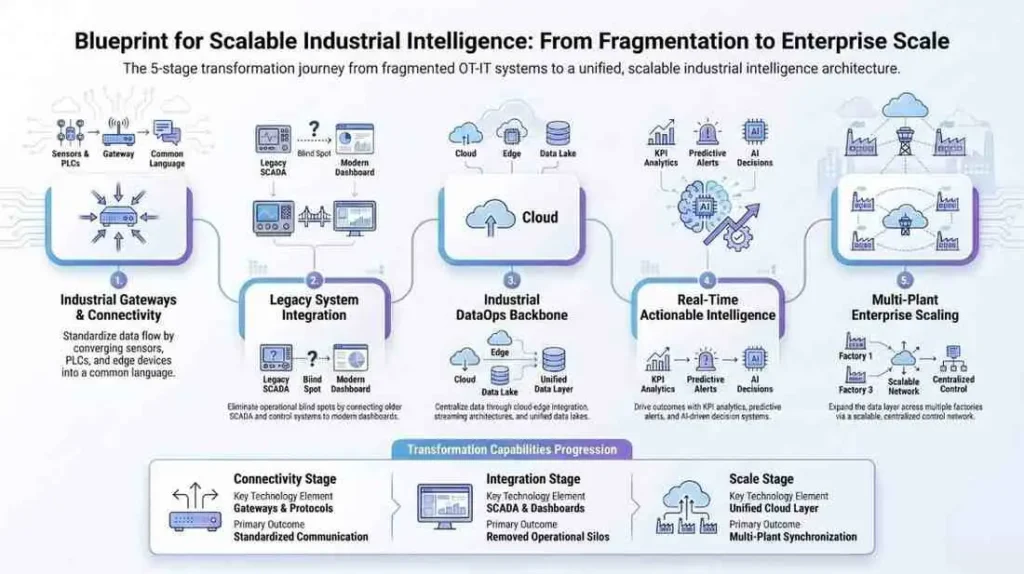

Addressing the fragmentation between OT and IT goes beyond mere point integrations or small adjustments. It requires a well-structured strategy that systematically integrates disconnected systems into a unified and scalable data and intelligence framework.

This process typically unfolds in five essential stages.

Industrial Gateways: Establishing a Common Data Language

The journey starts at the edge, where fragmentation is most evident. In industrial settings, a variety of protocols like Modbus, OPC UA, PROFINET, and proprietary interfaces coexist, but they were never meant to work together.

Industrial IoT deployments depend on IIoT gateways to convert and standardize these different protocols into a uniform data format right at the source. This guarantees that all downstream systems receive organized, standardized data, which removes the need for repeated data cleaning throughout the pipeline.

By addressing inconsistencies early on, organizations can establish a single, dependable entry point for machine data, no matter the type of equipment or protocol involved.
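As a rough sketch of what a gateway does conceptually, the example below turns two differently shaped inputs into one record format. It does not use any real Modbus or OPC UA client library. The function names, the record layout, and the assumption that a 32-bit float arrives as two 16-bit registers with the high word first are illustrative choices; real devices vary in word order.

```python
import struct


def from_modbus_float32(asset_id, measurement, unit, registers):
    """Decode two 16-bit registers (high word first, by assumption) as an IEEE 754 float."""
    high, low = registers
    (value,) = struct.unpack(">f", struct.pack(">HH", high, low))
    return {
        "asset_id": asset_id,
        "measurement": measurement,
        "value": value,
        "unit": unit,
        "source": "modbus",
    }


def from_typed_value(asset_id, measurement, unit, value):
    """Wrap an already-typed value, as an OPC UA style client would typically deliver."""
    return {
        "asset_id": asset_id,
        "measurement": measurement,
        "value": float(value),
        "unit": unit,
        "source": "opcua",
    }


# 0x41C80000 is the IEEE 754 encoding of 25.0
modbus_record = from_modbus_float32("oven-03", "temperature", "degC", (0x41C8, 0x0000))
opcua_record = from_typed_value("oven-04", "temperature", "degC", 25)
```

Downstream code only ever sees the shared dictionary shape, regardless of which adapter produced it.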

Legacy Integration: Eliminating Operational Blind Spots

One of the key obstacles to integration is the outdated equipment that lacks native connectivity. These machines are typically so integrated into production processes that replacing them is not a viable option.

Retrofitting presents a sensible way forward. By enhancing legacy systems with sensors, smart gateways, and vision-based data extraction when needed, organizations can integrate older assets into the digital framework. This strategy creates a digital view of once unclear operations, ensuring that vital data is captured and not missed.

A representative use case is the digitization of analog pressure gauge meters using AI-driven vision systems. In environments where gauges cannot be modified due to operational constraints, industrial-grade cameras are retrofitted to capture continuous visual readings.

Edge-deployed computer vision models interpret dial position, scale markings, and needle angles to extract precise numeric values in real time. These readings are converted into digital signals and integrated with alarm management and maintenance systems alongside additional sensor data such as temperature and vibration.

By enabling local, low-latency processing with high accuracy, this approach restores visibility into critical operations, supports predictive maintenance, and extends the usable life of legacy equipment without invasive changes.
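The last step of such a pipeline, turning a detected needle angle into a numeric reading, can be sketched as a linear interpolation. This assumes a gauge with a linear scale and that the vision model has already produced the needle angle; the function name and the example gauge geometry (45° to 315° sweep, 0 to 10 bar) are hypothetical.

```python
def needle_angle_to_value(angle_deg, min_angle_deg, max_angle_deg, min_value, max_value):
    """Linearly map a needle angle onto the gauge scale, clamped to the scale ends."""
    if max_angle_deg == min_angle_deg:
        raise ValueError("gauge sweep must span a non-zero angle")
    fraction = (angle_deg - min_angle_deg) / (max_angle_deg - min_angle_deg)
    fraction = min(1.0, max(0.0, fraction))  # ignore detections outside the scale
    return min_value + fraction * (max_value - min_value)


# Hypothetical gauge: needle sweeps from 45 to 315 degrees over 0 to 10 bar
mid_scale = needle_angle_to_value(180.0, 45.0, 315.0, 0.0, 10.0)
below_scale = needle_angle_to_value(30.0, 45.0, 315.0, 0.0, 10.0)
```

Gauges with non-linear scales would need a calibration table instead of a single linear mapping.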

Figure: Typical stages for a unified and scalable industrial framework

Industrial DataOps: Building a Unified Data Backbone

Once data is consistently captured from various assets, the focus turns to making it usable on a larger scale. Industrial DataOps functions as the connective layer that transforms raw machine signals into a structured, contextualized, and always accessible data foundation.

This process involves defining asset hierarchies, standardizing tags so similar measurements are labelled consistently across machines, enriching data with meaningful metadata such as asset identity, location, units, and operational context, and organizing it within a cohesive namespace that is intuitive for both operational and enterprise contexts.
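A small sketch can show how these pieces fit together: an asset hierarchy, a tag alias table, and metadata enrichment that yields one namespace path per measurement. The hierarchy levels, tag names, and path layout are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass

# Hypothetical vendor tags mapped to one standardized measurement name
TAG_ALIASES = {
    "TT101.PV": "temperature",
    "Temp_Zone1": "temperature",
    "VIB_X": "vibration",
}


@dataclass(frozen=True)
class Asset:
    enterprise: str
    site: str
    area: str
    line: str
    name: str

    def path(self):
        """Namespace path built from the asset hierarchy."""
        return "/".join([self.enterprise, self.site, self.area, self.line, self.name])


def contextualize(asset, raw_tag, value, unit):
    """Standardize the tag and attach asset identity, location, and unit."""
    measurement = TAG_ALIASES[raw_tag]
    return {
        "topic": f"{asset.path()}/{measurement}",
        "value": value,
        "unit": unit,
        "asset": asset.name,
        "site": asset.site,
        "raw_tag": raw_tag,
    }


oven = Asset("acme", "plant-a", "baking", "line-2", "oven-03")
point = contextualize(oven, "TT101.PV", 182.5, "degC")
```

Because the raw tag is kept as metadata, the original signal can still be traced while applications subscribe to the standardized topic.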

Continuous data pipelines then connect edge systems directly to enterprise platforms like MES, SCADA, and ERP.

Instead of maintaining separate OT and IT environments with manual data exchanges, organizations create a shared, reliable backbone. This approach eliminates the need to rebuild integrations for every new use case, allowing applications and analytics to scale on a consistent data layer.

Real-Time Intelligence: Driving Measurable Outcomes

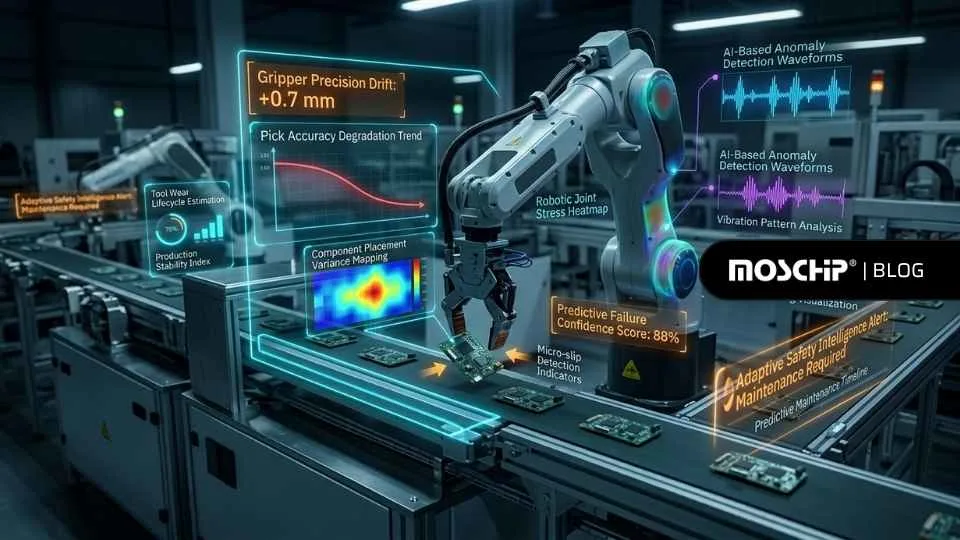

Once a strong data foundation is in place, the focus transitions from infrastructure to the outcomes achieved. Metrics such as Overall Equipment Effectiveness (OEE) evolve from static reports into tools that provide real-time operational insights.

Continuous monitoring of machine states, automatic classification of downtime events, and tracing production losses back to their root causes as they happen become feasible. This proactive capability enables production teams to act swiftly, improving throughput and minimizing inefficiencies during the same operational window.
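For reference, OEE is conventionally computed as availability × performance × quality. The sketch below derives downtime from a list of machine-state intervals and then applies that formula. The state names, shift data, and function names are hypothetical examples.

```python
def downtime_minutes(intervals):
    """Sum the minutes of every interval whose state is not 'running'."""
    return sum(minutes for state, minutes in intervals if state != "running")


def oee(planned_min, downtime_min, ideal_cycle_min, total_count, good_count):
    """Return availability, performance, quality, and their product (OEE)."""
    run_min = planned_min - downtime_min
    if planned_min <= 0 or run_min <= 0 or total_count <= 0:
        raise ValueError("planned time, run time, and total count must be positive")
    availability = run_min / planned_min
    performance = (ideal_cycle_min * total_count) / run_min
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Hypothetical shift: (state, minutes) intervals as reported by the machine
shift = [("running", 200.0), ("fault", 50.0), ("running", 120.0), ("changeover", 30.0)]
result = oee(
    planned_min=400.0,
    downtime_min=downtime_minutes(shift),
    ideal_cycle_min=0.5,
    total_count=512,
    good_count=448,
)
```

Recomputing this on every state change, rather than at the end of a shift, is what turns OEE from a report into a live signal.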

At this stage, the integration efforts start to translate into real business impact: faster decision-making, enhanced asset utilization, and measurable gains in productivity.

Scaling the Data Layer Across Plants

While having these capabilities in a single plant is a notable achievement, the real difficulty arises when trying to scale them across multiple locations. Each facility introduces its own variability in terms of machines, protocols, and levels of digital maturity, which can complicate expansion and lead to a series of custom integration challenges.

To address this, standardization needs to be built into the foundation of operations. This includes establishing consistent data models, creating reusable pipelines, and implementing a coordinated edge-to-cloud architecture that can adapt to the unique needs of each plant without requiring a complete redesign.
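One possible shape for a reusable pipeline is a shared base configuration with small per-plant overrides. The sketch below is an assumption about how that could look; the configuration keys, values, and host name are hypothetical.

```python
from copy import deepcopy

# Hypothetical shared template that every plant starts from
BASE_PIPELINE = {
    "data_model": "asset-v1",
    "normalize": {"temperature_unit": "degC", "timestamp": "utc-iso8601"},
    "publish": {"target": "enterprise-broker", "interval_s": 5},
    "sources": [],
}


def build_plant_config(base, overrides):
    """Merge overrides into a copy of the base: nested dicts merge, other values replace."""
    merged = deepcopy(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = build_plant_config(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Plant A only declares what differs: its own sources and a faster publish interval
plant_a = build_plant_config(
    BASE_PIPELINE,
    {
        "sources": [{"protocol": "modbus", "host": "plc-a.example"}],
        "publish": {"interval_s": 1},
    },
)
```

The base template is never mutated, so each new plant inherits the same data model and normalization rules while keeping its local differences explicit.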

This method ensures that every new deployment can build on previous implementations instead of starting from scratch, which reduces costs, accelerates timelines, and maintains momentum throughout the organization.

Enabling This at Scale: MosChip + Litmus

Solving OT–IT fragmentation at scale requires a foundation that is not just connected, but standardized, repeatable, and built for the real shop floor. The MosChip + Litmus approach structures IIoT as a progressive industrial data stack, moving from raw OT signals to scalable intelligence.

Litmus Edge forms the manufacturing-grade data foundation by connecting modern and legacy OT assets through native industrial protocol support. It normalizes and contextualizes data at the edge, supports local analytics and event processing, and enables centralized management across plants, ensuring consistent, AI-ready data flows into enterprise and cloud systems.

MosChip builds on this with deep engineering and integration expertise. From machine onboarding and firmware optimization to OT‐IT integration and deployment, MosChip ensures that the platform works reliably in real business environments while enabling plant-specific AI use cases.

On top of this foundation, MosChip’s DigitalSky GenAIoT suite acts as an acceleration layer. With pre-built, field-tested, reusable AI, edge AI, and GenAI models that are easily edge-enabled, it reduces reinvention and speeds up deployment. Its capabilities include predictive models, operator copilots, and real-time edge intelligence that transform standardized data into actionable outcomes.

Together, MosChip and Litmus allow for rapid onboarding, field‐tested connectivity, normalized data, and GenAI‐ready operations, creating an iterative path from single‐plant success to multi‐site enterprise transformation, without rebuilding the foundation each time.

MosChip combines deep digital engineering with Industrial IoT to build scalable, cloud-native, and edge-enabled solutions. From IIoT gateways and legacy system modernization to DataOps, AI/ML, and secure OT-IT integration, it delivers intelligent, real-time, and production-ready platforms tailored for industrial environments and connected ecosystems.

To know more about MosChip’s capabilities, drop us a line, and our team will get back to you.


Darshil is a Marketing professional at MosChip creating impactful techno-commercial writeups and conducting extensive market research to promote businesses on various platforms. He has been a passionate marketer for more than four years and is constantly looking for new endeavors to take on. When he’s not working, Darshil can be found reading and playing guitar.