A PoV on Engineering AI Evaluation: From Evaluation to Security

As AI integrates across business operations today, companies are shifting from experimental pilots to full-scale deployments. The evaluation needs to become a more rigorous, engineering-focused process.

Unlike traditional testing methods that are straightforward, AI Engineering systems, whether they are large language models or autonomous agents, function based on probabilistic principles. This requires complex validation workflows. Today’s product testing methods incorporate automated metric checks, “LLM-as-a-judge” systems, and real-time security measures to guarantee accuracy, resilience, and compliance.

DeepEval, RAGAs, and G-Eval are tools that facilitate detailed performance assessments, while Purple Llama components shall protect from prompt injections and insecure code. By integrating these practices into CI/CD pipelines, organizations can effectively monitor for model drift, bias, and security vulnerabilities, transforming evaluation from a one-time audit into a continuous governance process.

This organized approach not only fosters confidence and agility but also guarantees that AI solutions remain robust and scalable in ever-changing enterprise environments. Ultimately, it’s essential to consider AI evaluation as a core part of engineering to deploy AI systems that are secure, reliable, and ready for the future.

In this article, Toral Mevada, Manager of Automation and QA, shares her thoughts on how structured AI evaluation workflows can revolutionize quality engineering. She points out the critical role of automation, security practices, and compliance frameworks in achieving AI deployments that are dependable, scalable, and resilient.

Q1: What tools and frameworks can be used to evaluate AI Solutions?

A1: Evaluating AI goes beyond simply determining if the model functions correctly; it also involves ensuring that it is accurate, safe. Achieving this requires the appropriate tools and framework.

For advanced AI systems that involve LLM models and Retrieval-Augmented Generation (RAG) models, DeepEval and RAGAs are industry favourites. They provide specialized metrics such as reliability, answer relevance, and contextual recall. Additionally, DeepEval integrates with the Confident AI dashboard, allowing teams to track performance trends and regressions in one place.

If you’re tackling traditional NLP tasks such as translation or classification, Hugging Face Evaluate is an excellent option. The evaluation process uses benchmarks such as BLEU, ROUGE, and METEOR. However, when these standard metrics fall short, particularly in evaluating tone or brand voice, G-Eval becomes incredibly useful. It allows for the creation of custom metrics and utilizes LLMs to assess nuanced elements like Knowledge Retention.

Security is equally crucial. This is where Purple Llama tools really stand out. Tools like Llama Guard, Prompt Guard, and Code Shield are built to guard against prompt injections, jailbreaks, and insecure code. Additionally, we incorporate security measures into our evaluation scripts to ensure that safety is prioritized from the start.

At the core, architecture is important. We utilize a Swappable Judge Architecture to enhance flexibility:

- Local Inference: We run open-weight judge models like Llama 3 or Mistral through Ollama, which allows us to prioritize privacy and manage costs.

- Cloud Inference: For quick evaluations, we turn to Groq, especially when scaling to thousands of test cases in CI/CD pipelines.

This strategy ensures that your AI evaluation process is solid, secure, and equipped for enterprise-level deployment.

Q2: What workflow can be adopted for evaluation?

A2: Evaluate a particular AI Engineering approach’s integration with its overall architecture/solution and integrate it into how you do business. Let’s outline a detailed framework for evaluating the effectiveness (security, performance, and accuracy) of your AI solution.

- Define Your AI System and Task

The first step in evaluating the AI system is to identify the kind of AI it is (large language models (LLMs), Retrieval-augmented Generator (RAG), as well as its primary function within the AI system, i.e., chatbot, code generator, translator, etc. Determining what type of AI model, you are evaluating and the main purpose of the model will help inform how to evaluate the model and help you build a dataset that contains valid examples of both high-quality input(s) and “ground truth” output(s) for your application.

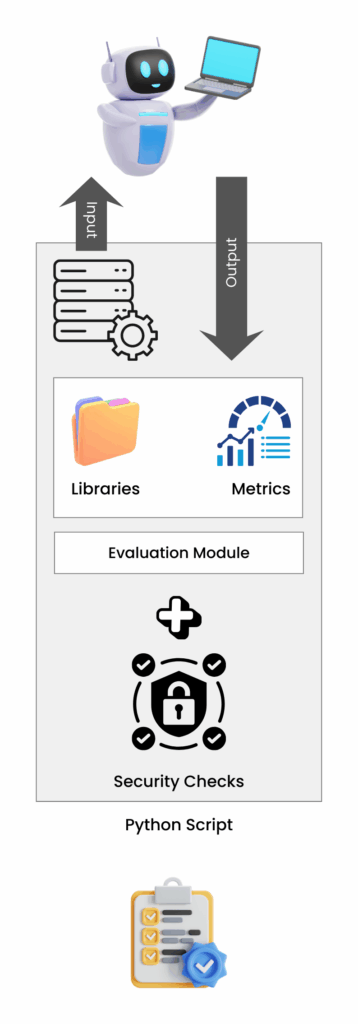

- Custom Scripts to Automate Test Automation

With the power of Python, you can create automated test scripts that will allow you to perform automated tests on your model using data from your training set. To check your AI model, you need to send test data along with AI output to the “Judge Model”. It will then process the data and send the results back to your own script. In that script, the Judge Model will look at the results using your specific evaluation rules. This whole process helps you confirm if your model is good enough for your standards.

- Multi-turn Conversation Workflow

For chatbots or conversational AIs, the workflow must be “stateful”. This means that during your testing, you are simulating the interactions of an actual user and keeping track of the “turns” that occur within the conversation. For example, as an automated test continues with the “turns” that occur in the conversation, the test can evaluate both Conversation Completeness and Turn Relevancy, demonstrating that the AI is aware of and can keep track of context and maintain coherence throughout the entire conversation.

QA Evaluation Workflow for AI_MosChip

4.Use Real-time Checks to Increase Security

Once the model returns an answer, complete a Security Check Step on it. Tools like Purple Llama Firewall, such as Prompt Guard and Code Shield, can help identify any potential issues related to sensitive data leakage and insecure code prior to the user’s receipt of the model’s response.

5.Create Validation Reports That Can Be Used

The last step of this process is to create validation reports that will compile and present the results from all instances of testing conducted for all models. These validation reports offer a simple pass/fail rating system based on the user-defined thresholds, like a conventional Software Unit Test, and enable users to determine a model’s respective strengths and weaknesses and areas in need of improvement.

Why This Workflow Functions

The combination of maintaining conversation context, using automated testing, and using automated security checks establishes an efficient, repeatable, reliable evaluation method for the models you create and enhances user confidence and trust with your models and overall performance of your models.

Q3: How can automating Metrics Checks and Security checks assist in QA?

A3: Automating your metrics and security checks is a total game-changer. It basically turns a slow, manual audit into a continuous safety net that runs in the background. Instead of crossing your fingers and hoping for the best, you’re building a system that catches problems before they ever reach your users.

Here is how that helps the evaluation process:

- It catches “Model Drift” instantly

It catches “Model Drift” instantly. When you make even a small change to an AI, like changing just one prompt or adjusting a tiny setting called a hyperparameter, it can have a big effect on how the AI acts. By putting measurements like “Toxicity,” “Bias,” and how well the AI completes its “Tasks” right into your regular work process (your CI/CD pipeline), you’re basically setting up automatic checkpoints. If a change makes the AI less accurate or more biased, the system stops that change from going out to users immediately. Think of it as having a quality control team working around the clock that never needs a break.

- It creates a “Defence-in-Depth” security layer

We bring in a full set of security tools, a whole “defence-in-depth” system. This includes the Purple Llama security suite with Prompt Guard and Code Shield built right in. We also use Regex-based scanners alongside those.

What all these scanners do is check for safety during the evaluation step. That way, we make sure our model isn’t just clever, but truly safe from issues like prompt injections or accidental sensitive data leaks.

- It makes compliance way less painful

It makes compliance a lot easier. If you want certifications like ISO 42001 (for AI management) or ISO 42005 (for impact assessments), you need to show that you’re keeping an eye on your AI. Automation helps a lot by creating a “continuous audit trail.” Every time the system checks something, it records the results.

Think about how easy it would be to find all the info you need when you’re audited. You’ll have a very clear, technical trail (documentation) that shows every version of your model was previously vetted for safety and performance. This solves two problems at once: How to quickly convert your AI into something other than a “black box” and how to turn your AI into a trustworthy, governed asset. Your stakeholders will feel confident they can rely on you and the data your AI generates.

Q4: What additional points to consider while evaluating Agentic AI solutions?

A4: Agentic AI systems are way more complicated because they are autonomous.

Think about a typical AI Engineering system (like a RAG system), it just follows a straightforward, step-by-step process. But here’s the thing: an agentic AI model isn’t like that. It makes its own choices, figures out which tools it needs to use, and even thinks through problems all on its own.

That means you can’t just glance at the result and say, ‘Okay, that’s done.’ You need to look at all the little decisions and the entire thought process it went through along the way to make sure it’s heading in the right direction.

This brings us to a crucial concept: Reasoning Traceability. We basically track the agent’s internal thought process to see if it’s moving in the right direction. Tools like DeepEval are super helpful here. To fix a complex agent that keeps getting stuck in a loop, you need a way to track exactly what’s happening. Using specific metrics for traceability is the best way to do that.

Some important checks include:

- Plan Quality & Adherence: We should determine if the agent formulated a good plan initially. It’s also vital to check if they followed through with that plan all the way to completion.

- Tool & Argument Correctness: Is the agent using the right tools and using them properly? Does it have all the information and settings it needs to finish the job correctly?

- Step Efficiency & Task Completion: Is the agent reaching its goal efficiently without wasting time or computer power?

We also check something called “Internal Agent Integrity,” which is super important, especially when a few agents are working together. We use G-Eval and add our custom rules to review how the agents reason things out by looking into their logs and how they pass tasks back and forth.

For example, if an agent gets stuck in an infinite loop or decides to take a risky shortcut, our evaluation system flags that immediately, even if the final answer ends up looking correct.

This whole approach is how we make sure our AI systems aren’t just accurate; they’re also safe, efficient, and completely ready for professional business use.

Q5: Some points from my experience of evaluating AI solutions.

A5: Based on my experience running countless AI evaluation cycles, I’ve learned that a model is only as good as the number of tests you throw at it. If you have a vision for moving away from demo-ware to producing a production-ready product, consider these three critical points as you navigate your journey:

- Your “Golden Dataset” is Everything

I’ve found that the most common failure point isn’t the model itself, but a weak Golden Dataset. If your test data doesn’t cover “edge cases”, like ambiguous queries or prompts that are completely off-topic, your model may appear perfect in a lab setting but will break down when real users engage with it. The most important thing you can do for your AI workflow is to create a diverse and representative dataset.

- Dealing with AI Judges the Model

It helps to remember that the “judges” we use are large language models (LLMs). Because of this, they can be a bit inconsistent or “non-deterministic”, their scores might change slightly each time you run them.

To smooth out these little variations, here are a few things I suggest:

- Use clear cut-offs: For example, only accept a score if it is above 0.80.

- Evaluate in groups: Running evaluations in batches helps to average out the scores and makes the results more stable overall.

- Be flexible in where to use Judge Model: I like to use Ollama to quickly run small “mini evaluations” right on my own computer for free. Once I am sure the logic is working correctly, I move it to a super-fast Groq system to check thousands of cases in just a few seconds.

- Treat Adherence and Knowledge Retention as “Unit Tests.”

In a corporate environment, it’s important to emphasize Role Adherence and Knowledge Retention. If an AI loses track of its persona or starts mixing up facts from just a few sentences back, it becomes a risk rather than a benefit.

Instead of merely “observing” these issues, approach them like software unit tests. By establishing clear pass/fail criteria for instructions and facts, you transition from “guessing” whether your AI is functioning correctly to creating a predictable, reliable, and secure system.

In summary, we offer a clear pathway for organizations to transition from AI prototypes to secure, production-ready solutions. Our method involves using comprehensive evaluation frameworks like DeepEval, RAGAs, and tailored G-Eval metrics. We design adaptable architectures with swappable Ollama/Groq judge systems and implement Purple Llama security guardrails to achieve compliance with ISO 42001/42005. Moreover, we specialize in validating Agentic AI to ensure tool accuracy and reasoning integrity, guaranteeing that your AI is trustworthy, scalable, and equipped for the future.

To know more about MosChip’s QA capabilities, drop us a line, and our team will get back to you.

Author

-

Toral is a manager at MosChip and has total of 12+ years of experience in quality engineering of Embedded Systems and DSP software platforms. In her career, she has worked on numerous QA and Automation projects, test framework development, and DevOps projects. She is passionate about achieving optimum process automation and developing productivity improvement tools. While not working she likes to travel and read.