Building a vision-based self-checkout retail solution

Checkout counters have evolved from mechanical cash registers to barcode scanners and then to self-service kiosks. Each stage reduced human involvement and increased transaction speed. Consumers now expect contactless and efficient shopping, especially in high-footfall environments where time and convenience define satisfaction.

According to Future Market Insights, the global self-checkout systems market is projected to reach around USD 3.9 billion by 2025 and grow at a CAGR of nearly 10.4% through 2035, ultimately approaching USD 10.5 billion. This strong growth reflects how intelligent automation is becoming a standard across retail.

Retail automation has matured to the point where physical interaction with the checkout interface can be minimized. The next logical leap is visual recognition: systems that identify products by sight and complete billing without any manual scan. This is where vision-based self-checkout becomes the most promising step forward in autonomous retail.

The concept behind vision-based checkout

A vision-based self-checkout system recognizes items visually using computer vision and artificial intelligence. It removes the dependency on barcodes or RFID tags. Cameras observe the products on the counter, and inference models determine what each item is, linking it to the store’s product database for billing.

A vision-based self-checkout setup typically combines several technical layers that work in sync. Imaging units, such as cameras or optical sensors, capture different views of the products placed on the counter. These visual inputs are processed by edge computing hardware, where embedded processors run AI models locally for faster decision-making. The inference models, built using deep learning techniques, handle object detection and classification to identify each product from the captured images. Once recognized, the system matches these items with entries in a database, which may reside locally or in the cloud, linking each detected object to its corresponding stock-keeping unit (SKU).

Together, these components create an integrated ecosystem capable of perceiving, identifying, and completing purchases automatically.

Building blocks of the system

Computer vision and deep learning: Modern object recognition relies on convolutional and transformer-based networks capable of differentiating between thousands of product types. Detection architectures such as YOLO or Faster R-CNN locate and classify the products in each frame. Each frame captured by the camera is analyzed to locate product boundaries, interpret textures, and verify labels printed on packaging. On embedded platforms, reliable performance also depends on sound device software practices around the models.
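
Detectors of this kind often return several overlapping boxes for the same product, and a common post-processing step is non-maximum suppression (NMS). The following is a minimal pure-Python sketch of that idea, not the implementation of any particular framework. The box format `(x1, y1, x2, y2)`, the example coordinates, and the 0.5 overlap threshold are assumptions chosen for illustration.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed pixel coordinates


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: List[Box], scores: List[float],
                        iou_threshold: float = 0.5) -> List[int]:
    """Return indices of the boxes that survive, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # Keep a box only if it does not heavily overlap a better-scoring kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in kept):
            kept.append(i)
    return kept


# Two near-duplicate boxes on one product, plus a separate product.
boxes = [(10, 10, 110, 110), (12, 12, 112, 112), (200, 50, 260, 150)]
scores = [0.90, 0.75, 0.80]
kept = non_max_suppression(boxes, scores)
print(kept)  # [0, 2]
```

The lower-scoring duplicate (index 1) is dropped, so each physical item is billed once.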

Sensor fusion: A single camera often cannot ensure full accuracy. Many deployments combine RGB cameras with depth sensors and weight scales. The combined data improves recognition confidence, especially when products overlap or partially obscure one another.
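
One simple way to picture this fusion is to re-rank visually similar candidates by how well the scale reading matches each candidate's catalog weight. The sketch below assumes a Gaussian-shaped weight score and multiplies it with the vision confidence. The SKU names, weights, and the 15 g spread are hypothetical values, and real deployments may fuse signals quite differently.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical catalog weights in grams (illustrative values only).
CATALOG_WEIGHT_G: Dict[str, float] = {
    "cola_330ml": 350.0,
    "cola_500ml": 530.0,
}


def weight_likelihood(measured_g: float, expected_g: float, sigma_g: float = 15.0) -> float:
    """Gaussian-shaped score in [0, 1]: 1.0 when the scale matches the catalog weight."""
    return math.exp(-0.5 * ((measured_g - expected_g) / sigma_g) ** 2)


def fuse(vision_scores: Dict[str, float], measured_g: float) -> List[Tuple[str, float]]:
    """Multiply each vision confidence by its weight score, then normalize and rank."""
    raw = {
        sku: conf * weight_likelihood(measured_g, CATALOG_WEIGHT_G[sku])
        for sku, conf in vision_scores.items()
    }
    total = sum(raw.values())
    if total == 0:
        return []  # the scale disagrees with every candidate; defer to a fallback flow
    return sorted(((s, v / total) for s, v in raw.items()), key=lambda p: p[1], reverse=True)


# The camera slightly prefers the small can, but the scale reads 528 g.
vision = {"cola_330ml": 0.55, "cola_500ml": 0.45}
ranked = fuse(vision, measured_g=528.0)
print(ranked[0][0])  # cola_500ml
```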

Edge AI processing: Inference happens locally on the embedded system rather than in the cloud. This keeps latency minimal and ensures stable performance even when connectivity fluctuates. Edge computing also enhances privacy, as sensitive images need not leave the local network.

Data pipeline: The entire process follows a defined chain: image capture, inference, item classification, SKU retrieval, and billing integration. Every stage contributes to the precision and reliability of the final transaction.
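
The chain described above can be sketched as a sequence of small functions, one per stage. Everything here is a stand-in: `capture_image` and `run_inference` are stubs returning fixed data, and the labels, SKUs, prices, and the 0.6 confidence cut-off are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Detection:
    label: str         # class name predicted by the model
    confidence: float  # score in [0, 1]


# Hypothetical label -> (SKU, unit price in cents) table standing in for the product database.
SKU_TABLE: Dict[str, Tuple[str, int]] = {
    "granola_bar": ("SKU-1001", 149),
    "orange_juice_1l": ("SKU-2040", 329),
}


def capture_image() -> bytes:
    """Stub for the camera stage; a real system would return an actual frame."""
    return b"\x00" * 16


def run_inference(frame: bytes) -> List[Detection]:
    """Stub for the model stage; returns fixed detections for illustration."""
    return [
        Detection("granola_bar", 0.94),
        Detection("orange_juice_1l", 0.88),
        Detection("granola_bar", 0.41),  # low-confidence detection, filtered out below
    ]


def classify_items(detections: List[Detection], min_conf: float = 0.6) -> List[str]:
    """Keep only the labels the model is reasonably sure about."""
    return [d.label for d in detections if d.confidence >= min_conf]


def retrieve_skus(labels: List[str]) -> List[Tuple[str, int]]:
    """Map each accepted label to its SKU record."""
    return [SKU_TABLE[label] for label in labels if label in SKU_TABLE]


def build_bill(sku_records: List[Tuple[str, int]]) -> dict:
    """Billing integration stage: total the basket in integer cents."""
    return {
        "lines": [sku for sku, _ in sku_records],
        "total_cents": sum(price for _, price in sku_records),
    }


bill = build_bill(retrieve_skus(classify_items(run_inference(capture_image()))))
print(bill)  # {'lines': ['SKU-1001', 'SKU-2040'], 'total_cents': 478}
```

Keeping each stage as a separate function makes it easier to measure where errors or latency enter the transaction.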

Hardware architecture of vision-based self-checkout

The hardware stack for a visual checkout system combines optical, computational, and structural design.

High-resolution cameras with stable lighting are essential to maintain consistent image quality. Diffused LED panels or controlled lighting enclosures help reduce shadows and reflections. Compute modules built on embedded processing platforms handle the AI workload at the core of the design.

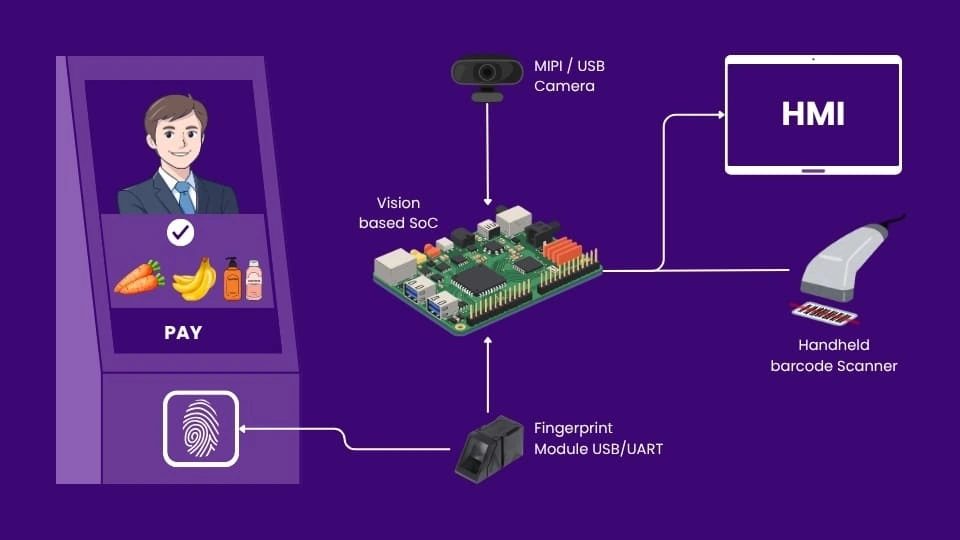

Connectivity interfaces like MIPI CSI, USB, or Ethernet link the imaging units with the processing board. Each connection must sustain high data throughput without overheating or frame drops.
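
To get a feel for the throughput involved, the raw bandwidth of an uncompressed stream is width × height × bytes per pixel × frame rate. The short calculation below assumes a 1080p camera at 30 frames per second with 2 bytes per pixel (for example YUV 4:2:2). These figures are illustrative and not taken from any specific kiosk design.

```python
def raw_stream_mbps(width: int, height: int, bytes_per_pixel: float, fps: float) -> float:
    """Uncompressed video bandwidth in megabits per second (1 Mbit = 10**6 bits)."""
    return width * height * bytes_per_pixel * fps * 8 / 1e6


# Assumed example: one 1080p camera, 2 bytes per pixel, 30 frames per second.
per_camera = raw_stream_mbps(1920, 1080, 2, 30)
print(round(per_camera, 1))  # 995.3
```

Under these assumptions a single uncompressed stream already exceeds the 480 Mbit/s signaling rate of USB 2.0 and comes close to saturating a 1 Gbit/s Ethernet link, so multi-camera setups need either a faster interface or compression at the camera.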

Hardware engineers face real challenges in balancing power efficiency and performance. Thermal management in compact kiosks requires careful enclosure design. Form factor constraints must also allow integration with existing point-of-sale terminals and payment modules. Every mechanical and electronic decision directly affects real-world reliability.

Vision-based self-checkout system overview

Software stack and data flow

The intelligence of the system depends on its software structure. AI models are typically deployed using frameworks such as TensorFlow Lite, PyTorch, or ONNX Runtime. To run efficiently on embedded hardware, models are compressed, typically through techniques such as quantization and pruning, which reduces computation without sacrificing much accuracy.
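
One widely used form of model compression is quantization, where floating-point weights are stored as 8-bit integers. The deployment frameworks ship their own tooling for this, so the snippet below is only a plain-Python illustration of the underlying arithmetic, with made-up weight values, and not any framework's API.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Affine quantization of float weights to int8; returns (q_values, scale, zero_point)."""
    # The representable range is stretched to include 0.0 so that zero maps exactly.
    w_min, w_max = min(min(weights), 0.0), max(max(weights), 0.0)
    scale = (w_max - w_min) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(-128 - w_min / scale)
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map int8 values back to approximate float weights."""
    return [(v - zero_point) * scale for v in q]


weights = [-0.82, -0.10, 0.0, 0.33, 1.27]  # illustrative values
q, scale, zp = quantize_int8(weights)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```

Each weight now needs one byte instead of four, and for these values the round-trip error stays within half a quantization step, which is the trade-off behind "compressed without sacrificing much accuracy".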

A middleware layer connects the vision engine to the human-machine interface (HMI). APIs exchange recognition events, SKU data, and transaction updates. This creates a continuous communication loop between perception and commerce logic.
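
A recognition event passed across this middleware could look something like the sketch below. The `RecognitionEvent` fields and the JSON encoding are hypothetical choices for illustration; the actual message schema and transport depend on the product.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class RecognitionEvent:
    """Hypothetical message sent from the vision engine to the HMI layer."""
    session_id: str
    sku: str
    confidence: float
    quantity: int = 1


def encode_events(events: List[RecognitionEvent]) -> str:
    """Serialize events to JSON so that any transport can carry them."""
    return json.dumps([asdict(e) for e in events], sort_keys=True)


def decode_events(payload: str) -> List[RecognitionEvent]:
    """Rebuild typed events on the receiving side."""
    return [RecognitionEvent(**item) for item in json.loads(payload)]


payload = encode_events([RecognitionEvent("s-42", "SKU-1001", 0.94)])
received = decode_events(payload)
print(received[0].sku)  # SKU-1001
```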

The cloud remains useful for analytics, dataset updates, and retraining. New product packaging or seasonal items can be added through controlled synchronization without halting local operation.
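
A controlled synchronization of this kind can be approximated with a versioned catalog that is swapped in only after it has been checked, so the kiosk keeps billing from the old catalog in the meantime. The class and field names below are hypothetical, and the validation is deliberately minimal.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Catalog:
    version: int
    items: Dict[str, int] = field(default_factory=dict)  # SKU -> price in cents


class LocalCatalogStore:
    """Keeps serving the current catalog until a newer one passes basic checks."""

    def __init__(self, initial: Catalog) -> None:
        self._active = initial

    @property
    def active(self) -> Catalog:
        return self._active

    def apply_update(self, candidate: Catalog) -> bool:
        """Swap in the candidate only if it is newer and non-empty; otherwise keep the old one."""
        if candidate.version <= self._active.version or not candidate.items:
            return False
        self._active = candidate  # single reference swap; lookups never see a partial catalog
        return True


store = LocalCatalogStore(Catalog(1, {"SKU-1001": 149}))
stale = store.apply_update(Catalog(1, {"SKU-1001": 159}))                    # same version, rejected
fresh = store.apply_update(Catalog(2, {"SKU-1001": 149, "SKU-3007": 499}))  # newer, accepted
print(stale, fresh, store.active.version)  # False True 2
```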

Benefits of a vision-based self-checkout kiosk

- Vision-based self-checkout changes the economics of retail operations. It removes dependence on manual scanning and reduces transaction time. Staff can be redeployed for customer engagement rather than routine billing tasks.

- Fraud detection also improves. Systems can cross-check weight, motion, and image data to flag suspicious behavior automatically. The same vision feed that identifies products can detect missed items or incorrect placements.

- From the consumer’s perspective, the process feels faster and more natural. The system’s ability to collect operational data also helps stores analyze item flow and optimize shelf placement. Scalable edge deployments make it suitable for unmanned kiosks and 24/7 stores where human supervision is limited.
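
The weight cross-check mentioned in the fraud detection point above can be sketched as a comparison between the scale reading and the expected weight of the recognized basket. The SKU weights and the 20 g tolerance are hypothetical, and a production system would likely combine this with motion and image cues.

```python
from typing import Dict, List

# Hypothetical per-SKU weights in grams; a real store would load these from its catalog.
EXPECTED_WEIGHT_G: Dict[str, float] = {
    "SKU-1001": 45.0,
    "SKU-2040": 1060.0,
}


def flag_weight_mismatch(recognized_skus: List[str], measured_g: float,
                         tolerance_g: float = 20.0) -> bool:
    """Return True when the scale reading disagrees with the recognized basket."""
    expected = sum(EXPECTED_WEIGHT_G[sku] for sku in recognized_skus)
    return abs(measured_g - expected) > tolerance_g


basket = ["SKU-1001", "SKU-2040"]  # expected total: 1105 g
ok_case = flag_weight_mismatch(basket, measured_g=1110.0)     # 5 g off, within tolerance
suspicious = flag_weight_mismatch(basket, measured_g=1160.0)  # 55 g of unrecognized weight
print(ok_case, suspicious)  # False True
```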

Future of self-checkout systems

As embedded processors continue to advance, the boundary between edge and cloud will keep shifting. Future self-checkout systems will likely merge visual recognition with behavioral analysis; they will track not just items but motion, dwell time, and interaction sequences.

Generative approaches for synthetic data are also gaining ground. Engineers can now simulate thousands of product variations for model training without physically capturing each one. This accelerates development and keeps accuracy stable even with frequent SKU updates.

Interfacing with digital payment platforms and inventory systems will further close the loop, creating self-managed retail units that run continuously with minimal supervision.

Ultimately, vision-based self-checkout demonstrates how intelligent perception and embedded design can converge to redefine the retail experience. The focus is now less on feasibility and more on scalable execution: how efficiently, securely, and economically these systems can be deployed across different retail formats.

MosChip supports OEMs and retail solution providers in accelerating the shift from conventional systems to next-gen retail solutions with agentic traits. MosChip’s ProductXcelerate blueprint – Retail Kiosk is built on the Qualcomm® 8250CS platform, with vision capability for product recognition, biometric authentication, seamless payment checkout, and an improved user experience. The solution is completely hardware agnostic and helps OEMs rapidly deliver solutions with agency.

The blueprint integrates MosChip’s DigitalSky GenAIoT and AgenticSky VisionCore + HMICore frameworks to enable autonomous, transparent, and context-aware checkout experiences. These frameworks deliver agentic AI traits such as goal orientation, adaptiveness, and autonomy, allowing kiosks to perceive, interpret, decide, engage, and act autonomously to deliver exceptional user experiences across several industry use cases.

With deep expertise in product engineering services, from platform design to smart digital solutions, GenAI, and Agentic AI, MosChip can enable faster prototyping and scalable deployment of intelligent solutions across domains that bring visual perception, automation, and intelligence together in a single edge unit.

Author

Ambuj is a marketing professional at MosChip who creates impactful techno-commercial write-ups and conducts extensive market research to promote businesses on various platforms. He has been a passionate marketer for more than three years and is constantly looking for new endeavours to take on. When he’s not working, Ambuj can be found riding his bike or exploring new destinations.