Why is Unified Hardware becoming a core strategy for Edge AI platforms?

Edge AI is moving faster than the hardware it runs on. For engineering leaders, the question is no longer “Can we run this model?” but “Can we ship this product without three board re-spins and a thermal meltdown?”

These failures rarely appear in isolation. They surface late, when hardware, firmware, and workloads finally come together. Power limits collide with thermal constraints. Firmware timing slips under real load. Physical design margins vanish.

This is the point where traditional siloed development breaks down. PCB, mechanical, firmware, and AI teams optimize locally, but the system behavior is defined by how these choices interact. As data rates rise and AI workloads move closer to the edge, there is little room left for late correction.

Hardware unification addresses this gap by treating the system as a single engineering problem from the start, rather than a set of components to be stitched together at the end.

Fragmented hardware turns routine engineering into a schedule risk

In a fragmented hardware design approach, routine engineering tasks accumulate risk rather than resolving it. The lack of standardized tools across vendors makes issue isolation slow and uncertain. A signal integrity issue observed during bring-up may require navigating multiple proprietary toolchains, each with limited visibility into the full system context. What should be a straightforward diagnosis stretches into weeks of cross-team coordination.

Multi-vendor silicon further amplifies the problem. Each processor, memory device, and high-speed interface comes with its own reference assumptions and validation scope. When these components are combined on a single board, prior validation cannot be reused with confidence. Engineers are forced to repeatedly revalidate signal integrity, power delivery, and timing margins, even when the underlying architectures are similar.

Debugging and performance visibility also remain fragmented. Power, thermal, and firmware behavior are often observed in isolation. This creates blind spots where failures emerge only under specific workload combinations or environmental conditions.

Vendor lock-in compounds the issue: proprietary IP and closed ecosystems restrict component substitution, limit second-sourcing, and lock long-term costs into the design.

Fragmentation rarely causes dramatic failures early. Instead, it drains time, confidence, and engineering focus throughout the lifecycle.

How can teams eliminate fragmentation and adopt a unified hardware design approach?

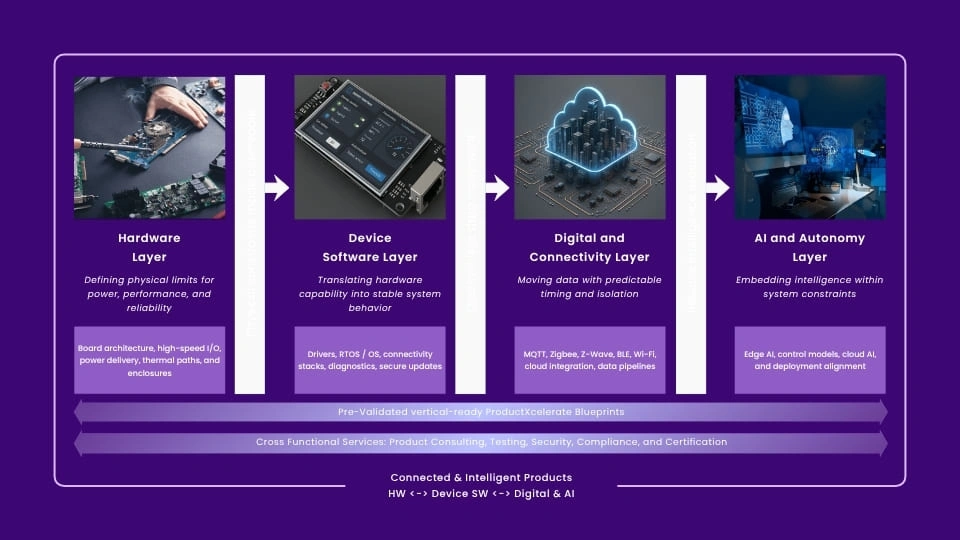

Hardware unification can be understood as a four-layer engineering structure where hardware, device software, and digital systems form a stable backbone, and intelligence is integrated within the same stack rather than added later. Together, these layers operate as a single product engineering system, rather than a loosely connected set of disciplines.

The intent is not to bundle more features into a design, but to ensure that decisions made at one layer do not undermine behavior at another. Each layer is developed with shared performance, reliability, and lifecycle targets, so system behavior is predictable before the product reaches integration or deployment.

Unified System Architecture for Edge AI Products

- Hardware layer: The hardware layer defines the system’s physical limits. Board architecture, high-speed interfaces, power delivery, thermal paths, and enclosures are designed together so performance, EMI/EMC, and environmental requirements are met without late trade-offs. This prevents reliability and schedule risk from being pushed into bring-up.

- Device software layer: Device software translates hardware capability into consistent system behavior. Drivers, OS layers, connectivity, diagnostics, and update paths are treated as reusable building blocks. Observability, security, and lifecycle updates are planned early, allowing firmware to evolve without destabilizing the platform.

- Digital and connectivity layer: The digital layer governs how data moves. High-bandwidth fabrics, low-latency packet engines, and communication paths are engineered around real workloads, not theoretical throughput. Common IoT and industrial protocols such as MQTT, Zigbee, Z-Wave, BLE, and Wi-Fi are integrated against explicit timing, isolation, and reliability targets rather than treated as best-effort links, so the focus is predictable behavior under load, not just connectivity.

- AI and autonomy layer: Intelligence is designed into the system from the start, not layered on later. AI models and control logic are developed with a clear awareness of power, latency, and hardware limits, and system models keep behavior consistent between development and deployment, so the product is AI-ready at launch.

When these four layers are aligned around shared performance and reliability targets, integration shifts from best-effort coordination to a disciplined engineering methodology. The system converges earlier, behaves more predictably, and reaches first-pass success without relying on late fixes or excessive revalidation.
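To make the idea of shared targets concrete, the sketch below checks whether the combined contributions of the four layers stay within one system-level power and latency budget. It is a minimal illustration under stated assumptions, not a MosChip tool: the `LayerBudget`, `SystemTargets`, and `check_budgets` names and every number are hypothetical, power is assumed to add across layers, latency is assumed to add along a single serial critical path, and thermal behavior is left out.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class LayerBudget:
    """One layer's share of the system-level budget (hypothetical figures)."""
    name: str
    power_w: float     # average power attributed to this layer
    latency_ms: float  # latency this layer adds to the critical path


@dataclass(frozen=True)
class SystemTargets:
    """Shared targets that every layer is designed against."""
    max_power_w: float
    max_latency_ms: float


def check_budgets(layers, targets):
    """Return a list of violated targets; an empty list means all targets are met.

    Assumes power adds across layers and latency adds along one serial path.
    """
    total_power = sum(layer.power_w for layer in layers)
    total_latency = sum(layer.latency_ms for layer in layers)
    violations = []
    if total_power > targets.max_power_w:
        violations.append(
            f"power {total_power:.1f} W exceeds {targets.max_power_w:.1f} W budget"
        )
    if total_latency > targets.max_latency_ms:
        violations.append(
            f"latency {total_latency:.1f} ms exceeds {targets.max_latency_ms:.1f} ms budget"
        )
    return violations


targets = SystemTargets(max_power_w=15.0, max_latency_ms=20.0)
layers = [
    LayerBudget("hardware", power_w=4.0, latency_ms=0.5),
    LayerBudget("device software", power_w=0.5, latency_ms=1.0),
    LayerBudget("digital and connectivity", power_w=1.5, latency_ms=2.0),
    LayerBudget("AI and autonomy", power_w=6.0, latency_ms=12.0),
]
print(check_budgets(layers, targets))  # []

# A heavier model is evaluated against the same shared targets.
heavier = layers[:3] + [replace(layers[3], power_w=10.0)]
print(check_budgets(heavier, targets))  # ['power 16.0 W exceeds 15.0 W budget']
```

Running the same check whenever any layer changes (a new model, a faster interface, a firmware update) surfaces a budget conflict at design time instead of at bring-up, which is the practical benefit of aligning all layers around shared targets.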

Real-world use cases of hardware unification

- Unified gateway for software-defined vehicles (SDVs)

SDVs depend on a central gateway that must run safety-critical control alongside diagnostics, updates, and data analytics under strict timing and reliability constraints. When these functions are integrated incrementally, systems that appear stable in isolation become unpredictable once real workloads run together.

Hardware unification changes this by architecting compute, safety controllers, isolation domains, and vehicle networks as one system from the outset. Deterministic control paths are separated in hardware from best-effort processing, and power, thermal, memory, and networking behavior are validated against continuous vehicle operation. This allows the gateway to evolve through software updates without destabilizing the vehicle, turning it into a stable backbone that supports current ECUs while scaling toward software-defined and fleet-level intelligence without repeated hardware redesign.

- Industrial and building management gateway

Industrial and building management systems rely on gateways that must operate continuously while handling control logic, multiple field protocols, analytics, and remote management in harsh environments. When ruggedization, connectivity, and intelligence are integrated separately, systems often degrade over time as analytics and monitoring workloads interfere with core operations.

Hardware unification addresses this by designing compute, deterministic control paths, industrial networking, and edge intelligence as a single system. Power delivery, thermal behavior, and memory stability are validated for sustained operation, while control and analytics workloads are isolated to preserve predictable behavior. The result is a gateway that maintains uptime, supports predictive maintenance and remote updates, and continues to operate reliably as system complexity grows.
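Both gateways depend on keeping deterministic control work predictable while best-effort work such as analytics competes for the same resources. The article describes separating these paths in hardware; the sketch below shows only the scheduling policy behind that separation, as a software analogy under stated assumptions: each cycle has a fixed time budget, control tasks always run first and in full, and analytics tasks run only in leftover time and are otherwise deferred. `Task`, `run_cycle`, the task names, and the costs are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A unit of work with an estimated execution cost (hypothetical)."""
    name: str
    cost_ms: float


def run_cycle(control, analytics, cycle_ms):
    """Execute one scheduling cycle and return the names of the tasks that ran.

    Control tasks always run first and in full. Analytics tasks run in FIFO
    order only while they fit in the time left over; the first one that does
    not fit, and everything behind it, stays queued for a later cycle.
    """
    control_total = sum(task.cost_ms for task in control)
    if control_total > cycle_ms:
        # Deterministic work must never be squeezed: treat this as a design error.
        raise RuntimeError("control workload alone overruns the cycle budget")

    executed = []
    remaining = cycle_ms - control_total
    while control:
        executed.append(control.popleft().name)

    while analytics and analytics[0].cost_ms <= remaining:
        task = analytics.popleft()
        remaining -= task.cost_ms
        executed.append(task.name)
    return executed


control = deque([Task("read_sensors", 1.0), Task("update_actuators", 2.0)])
analytics = deque([Task("anomaly_model", 4.0), Task("upload_batch", 5.0)])

print(run_cycle(control, analytics, cycle_ms=10.0))
# ['read_sensors', 'update_actuators', 'anomaly_model']
print([task.name for task in analytics])
# ['upload_batch']  (deferred to the next cycle)
```

Reserving the control share first and letting analytics absorb only the remaining slack is what keeps control timing independent of analytics load. In a real gateway, the same property would typically come from hardware partitioning, separate cores, or RTOS priorities rather than a loop like this one.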

Future of Edge AI systems with hardware unification

As edge and high-speed systems continue to grow in density and autonomy, margins for late correction will shrink further. Systems that rely on post-hoc integration and late-stage debugging will struggle to scale. Systems built on unified hardware foundations will evolve predictably across product generations.

In short, unification turns hardware from a series of isolated projects into reusable platforms. It supports reuse, accelerates development cycles, and stabilizes compliance and deployment. Most importantly, it allows organizations to focus engineering effort on differentiation rather than requalification.

MosChip’s expertise across silicon engineering, high-speed PCB design, system architecture, embedded software, and ruggedized product deployment reflects the realities of building modern edge and high-speed systems. This breadth allows hardware, firmware, mechanical, and AI constraints to be addressed together, rather than reconciled late in the cycle.

Through integrated product engineering practices and its solution accelerator, ProductXcelerate Blueprints, MosChip applies hardware unification as an execution discipline. The blueprints provide pre-validated system foundations that align compute, interconnect, power, thermal behavior, firmware, and lifecycle management. This enables teams to move from concept to deployment with predictable system behavior, reduced revalidation effort, and lower integration risk.

Author


Ambuj is a Marketing professional at MosChip creating impactful techno-commercial writeups and conducting extensive market research to promote businesses on various platforms. He has been a passionate marketer for more than three years and is constantly looking for new endeavours to take on. When he’s not working, Ambuj can be found riding his bike or exploring new destinations.