What is Auracast and how does it enable Bluetooth LE Audio broadcast?

Wireless audio did not change overnight. Progress focused on better codecs, lower power use, and smaller devices. Performance improved, batteries lasted longer, and form factors shrank. The connection model, however, stayed largely the same: one source, one listener, one maintained link.

That structure worked when audio was mostly personal. It becomes inefficient when the same stream must reach many listeners at once. Public information systems, assistive listening environments, shared media spaces, and group communication settings reveal this limitation.

The next stage of Bluetooth audio evolution shifts focus from connection to distribution.

Modern wireless audio systems must support shared listening, not just private connections, but Bluetooth audio architectures were originally optimized for point-to-point use. A source device pairs with a single receiver, negotiates codec and transport parameters, and maintains that link during playback. This structure works efficiently for one-to-one listening in Classic Bluetooth, but power consumption rises and scalability suffers as the number of listening devices increases.

Limitations become apparent when identical audio must reach many listeners at the same time. Classic Bluetooth does not include a native broadcast mechanism. Companies implementing multi-listener audio often duplicate streams at higher software layers or rely on vendor-specific extensions. As the number of receivers grows, bandwidth demand increases, connection-management overhead multiplies, and maintaining synchronization across devices becomes more difficult.

These constraints are most visible in shared physical environments. Most existing shared-audio systems in such spaces rely on infrared transmitters, proprietary radios, or fixed venue hardware, which increases installation complexity and limits user device choice.

Auracast, introduced within Bluetooth LE Audio, addresses this distribution gap at the protocol level. A transmitter encodes the audio once and broadcasts a synchronized stream that compatible receivers can access simultaneously without establishing individual paired links. This replaces connection-heavy architectures with a scalable audio distribution model built into the Bluetooth stack. For companies building such systems, embedded software development becomes essential to enabling scalable audio distribution across device platforms.

Understanding how this broadcast model works requires a look at the protocol mechanisms introduced with Bluetooth LE Audio.

How does Auracast work at the protocol level?

Auracast is built on the Bluetooth LE Audio architecture, which introduces new capabilities at the codec, link, and control layers.

At the codec layer, LE Audio introduces the Low Complexity Communication Codec (LC3) in place of the SBC codec used by Classic Bluetooth audio. LC3 is designed for efficiency: it delivers comparable or better perceived audio quality at significantly lower bitrates. A lower bitrate means smaller packets and less airtime per packet, which improves both spectral efficiency and power efficiency compared with Classic Bluetooth audio implementations. This is particularly important for battery-operated devices such as earbuds, hearing aids, and portable receivers that must remain active while listening to broadcast streams, and for dense radio environments where many receivers operate simultaneously.
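The link between bitrate and airtime can be made concrete with a little arithmetic. The following Python sketch computes the encoded payload size of one codec frame and the time needed to send just those payload bits. The function names, the 2 Mbit/s PHY rate, and the example bitrates are illustrative assumptions rather than figures from this article, and packet headers and inter-frame gaps are ignored.

```python
def frame_payload_bytes(bitrate_bps: int, frame_us: int) -> int:
    """Encoded bytes produced per codec frame at a constant bitrate."""
    return bitrate_bps * frame_us // 8_000_000


def payload_airtime_us(payload_bytes: int, phy_bps: int = 2_000_000) -> int:
    """Microseconds needed to send the payload bits alone (no headers or gaps)."""
    return payload_bytes * 8 * 1_000_000 // phy_bps


# Hypothetical comparison: the same 10 ms frame at two assumed bitrates.
for bitrate in (32_000, 96_000):
    size = frame_payload_bytes(bitrate, 10_000)
    print(bitrate, "bit/s:", size, "bytes,", payload_airtime_us(size), "us")
```

Under these assumptions, a 10 ms frame shrinks from 120 bytes at 96 kbit/s to 40 bytes at 32 kbit/s, and the payload airtime drops in the same proportion.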

At the link layer, LE Audio introduces isochronous channels for time-bounded packet delivery. Classic Bluetooth scheduling transmits packets when the radio becomes available. Isochronous transmission follows a different discipline. Packets are scheduled at fixed, predictable intervals. This structure supports predictable end-to-end delay and tight synchronization across receivers.

These fixed intervals are what enable the synchronized multi-channel audio delivery required for stereo playback and broadcast reception.
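Fixed-interval scheduling is easy to picture as a list of transmit instants. The sketch below is a simplified model, not controller code: `first_anchor_us` and `iso_interval_us` are hypothetical parameter names for the first scheduled instant and the spacing between events. It also shows how a receiver could work out when the next event is due, so it can keep its radio idle until then.

```python
from typing import List


def anchor_points(first_anchor_us: int, iso_interval_us: int, count: int) -> List[int]:
    """Transmit instants spaced exactly one interval apart."""
    return [first_anchor_us + n * iso_interval_us for n in range(count)]


def next_anchor(now_us: int, first_anchor_us: int, iso_interval_us: int) -> int:
    """Earliest scheduled instant at or after now_us."""
    if now_us <= first_anchor_us:
        return first_anchor_us
    elapsed = now_us - first_anchor_us
    events = -(-elapsed // iso_interval_us)  # ceiling division
    return first_anchor_us + events * iso_interval_us


# Assumed values: first event at 1 ms, one event every 10 ms.
print(anchor_points(1_000, 10_000, 4))
print(next_anchor(25_000, 1_000, 10_000))
```

Because every instant is derived from the same two numbers, any receiver that knows them arrives at the same schedule.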

Two channel types exist within this framework.

- Connected Isochronous Streams (CIS) support conventional device-to-device audio links, such as a smartphone connected to wireless earbuds.

- Broadcast Isochronous Streams (BIS) support one-to-many transmission. BIS forms the transport foundation for Auracast.

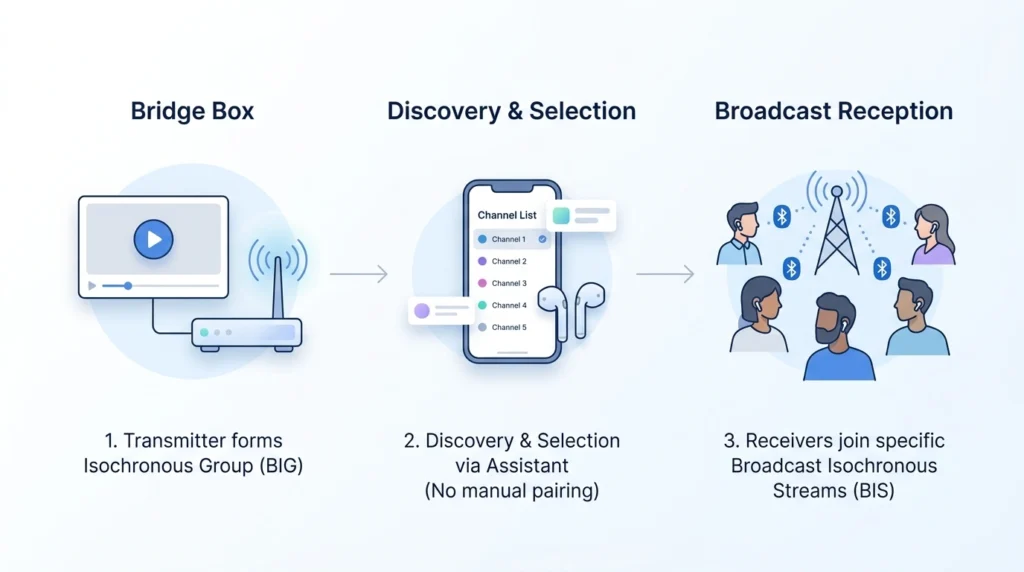

In broadcast operation, a transmitter creates a Broadcast Isochronous Group (BIG). A BIG can contain multiple BISes, and each BIS may carry a different audio component. One stream can carry the left stereo channel, another the right. Additional streams may provide alternate language tracks, descriptive audio, or commentary feeds.
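The group-and-stream relationship can be modeled as a small data structure. This is a conceptual sketch only: the class names `Big` and `Bis`, their fields, and the example labels are hypothetical and do not correspond to any particular Bluetooth stack API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Bis:
    index: int   # position within the group, starting at 1
    label: str   # what this stream carries, e.g. "left" or "commentary"


@dataclass
class Big:
    name: str
    streams: List[Bis] = field(default_factory=list)

    def add_stream(self, label: str) -> Bis:
        """Append a stream and give it the next free index."""
        bis = Bis(index=len(self.streams) + 1, label=label)
        self.streams.append(bis)
        return bis


# Hypothetical group: a stereo pair plus a commentary feed.
group = Big(name="Lounge TV")
for label in ("left", "right", "commentary"):
    group.add_stream(label)
print([(s.index, s.label) for s in group.streams])
```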

Receivers synchronize their clocks with the broadcast timing structure and reconstruct audio using scheduled packets. Predictable timing keeps stereo channels aligned and prevents drift between listeners.
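One way to see why listeners stay aligned: if every receiver plays a frame at a fixed offset from a shared timing reference, differences in decode speed do not matter as long as decoding finishes before that instant. The sketch below assumes a simplified model with a common reference time and a common presentation delay; the function and parameter names are hypothetical.

```python
def render_time_us(sync_reference_us: int, presentation_delay_us: int) -> int:
    """Instant at which every receiver should play the frame."""
    return sync_reference_us + presentation_delay_us


def ready_in_time(sync_reference_us: int, presentation_delay_us: int,
                  decode_done_us: int) -> bool:
    """True if decoding finished no later than the shared render instant."""
    return decode_done_us <= render_time_us(sync_reference_us, presentation_delay_us)


# Two hypothetical receivers finish decoding at different moments...
reference, delay = 100_000, 40_000
for decode_done in (112_000, 131_000):
    # ...yet both are told to play at the same instant.
    print(render_time_us(reference, delay), ready_in_time(reference, delay, decode_done))
```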

Discovery and stream access follow a defined control flow. Periodic Bluetooth LE advertising packets announce available streams and include metadata describing the broadcast. An assistant device, typically a smartphone or similar controller, scans these advertisements and presents available streams to the user. Once a selection is made, the assistant coordinates stream access. Receivers then join the chosen broadcast.

This separation keeps listening devices simple. Earbuds and hearing aids focus on reception and decoding, while the assistant device manages discovery, selection, and user interaction.
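The division of labor between assistant and receiver can be sketched in a few lines. Everything here is a hypothetical model of the flow described above, not a real Bluetooth API: `BroadcastAd`, `Assistant`, and `Receiver` are illustrative names, and an advertisement is reduced to an identifier and a display name.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class BroadcastAd:
    broadcast_id: int
    name: str


class Receiver:
    """Listening device: it only needs to know which broadcast to join."""

    def __init__(self, label: str) -> None:
        self.label = label
        self.synced_to: Optional[int] = None

    def join(self, broadcast_id: int) -> None:
        self.synced_to = broadcast_id


class Assistant:
    """Controller device: scans, lists, and hands the choice to receivers."""

    def __init__(self) -> None:
        self.seen: Dict[int, BroadcastAd] = {}

    def on_advertisement(self, ad: BroadcastAd) -> None:
        self.seen[ad.broadcast_id] = ad  # repeated adverts overwrite, not duplicate

    def available(self) -> List[str]:
        return sorted(ad.name for ad in self.seen.values())

    def select(self, name: str, receivers: List[Receiver]) -> bool:
        for ad in self.seen.values():
            if ad.name == name:
                for receiver in receivers:
                    receiver.join(ad.broadcast_id)
                return True
        return False


assistant = Assistant()
for ad in (BroadcastAd(1, "Gate 12"), BroadcastAd(2, "Lounge TV"), BroadcastAd(1, "Gate 12")):
    assistant.on_advertisement(ad)
earbuds = [Receiver("left bud"), Receiver("right bud")]
assistant.select("Lounge TV", earbuds)
print(assistant.available(), [r.synced_to for r in earbuds])
```

Note that the receivers in this model carry no scanning or user-interface logic at all, which mirrors the simplicity argument made above.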

Auracast Protocol and System Flow

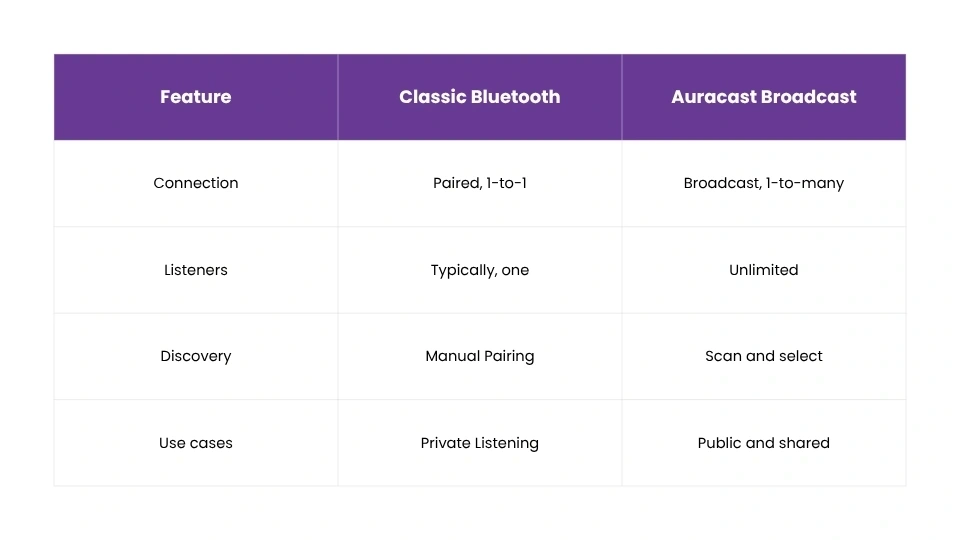

What makes Auracast different from Classic Bluetooth audio?

Classic Bluetooth audio scales poorly because each listener requires a separate connection. A source must maintain multiple links, manage independent data flows, and allocate bandwidth per device.

Auracast uses a single broadcast stream that many receivers can join. The transmitter sends one stream regardless of listener count. Receivers synchronize to the same transmission without creating individual links.
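A back-of-the-envelope model makes the scaling difference visible. The sketch below is deliberately idealized: it assumes each audio frame costs a fixed amount of airtime, ignores retransmissions and protocol overhead, and uses hypothetical function names and example numbers.

```python
def source_airtime_us(listeners: int, frame_airtime_us: int, broadcast: bool) -> int:
    """Airtime the source spends per audio interval."""
    if broadcast:
        return frame_airtime_us           # one transmission, whatever the audience size
    return listeners * frame_airtime_us   # one copy per connected listener


def max_per_listener_links(interval_us: int, frame_airtime_us: int) -> int:
    """Upper bound on listeners when each one needs its own copy."""
    return interval_us // frame_airtime_us


# Assumed numbers: 500 us of airtime per frame, one frame every 10 ms.
print(source_airtime_us(50, 500, broadcast=False))
print(source_airtime_us(50, 500, broadcast=True))
print(max_per_listener_links(10_000, 500))
```

In this toy model the per-listener approach runs out of airtime at 20 listeners, while the broadcast cost stays flat.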

Operational differences follow from this structure. There is no repeated pairing process for each listener. There is no per-device connection overhead. Stream discovery occurs through advertising rather than manual pairing. An assistant interface handles user selection, reducing friction in shared environments. This design makes large-scale wireless listening feasible within standard Bluetooth infrastructure.

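Summarizing the operational differences:

Classic Bluetooth and Auracast broadcast

| Aspect | Classic Bluetooth audio | Auracast broadcast |
| --- | --- | --- |
| Connection model | Separate connection per listener | Single broadcast stream that many receivers join |
| Source workload | Multiple links, independent data flows, bandwidth allocated per device | One stream regardless of listener count |
| Pairing | Repeated for each listener | Not required per listener |
| Discovery | Manual pairing | Advertising, with selection through an assistant interface |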

What devices and hardware are required for Auracast?

Auracast depends on hardware support introduced with Bluetooth 5.2 and later specifications. Devices must support LE Audio and isochronous channel capabilities. Earlier Bluetooth controllers and related hardware platforms lacked the required radio scheduling and protocol features, and these cannot be added through software updates alone.

On the transmission side, a device performs three core tasks. It captures audio from an input source, encodes that audio using LC3, and transmits encoded frames within scheduled broadcast intervals.
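These three tasks form a simple pipeline, sketched below. This is not an LC3 implementation: `encode` and `send` are caller-supplied placeholders standing in for a real codec and a real broadcast transport, and all names and numbers are assumptions made for illustration.

```python
from typing import Callable, Iterator, Sequence


def samples_per_frame(sample_rate_hz: int, frame_us: int) -> int:
    """PCM samples covered by one codec frame."""
    return sample_rate_hz * frame_us // 1_000_000


def split_frames(pcm: Sequence[int], frame_len: int) -> Iterator[Sequence[int]]:
    """Yield complete frames only; a trailing partial frame is not emitted."""
    for start in range(0, len(pcm) - frame_len + 1, frame_len):
        yield pcm[start:start + frame_len]


def broadcast_pipeline(pcm: Sequence[int], sample_rate_hz: int, frame_us: int,
                       encode: Callable[[Sequence[int]], bytes],
                       send: Callable[[int, bytes], None]) -> int:
    """Capture -> encode -> transmit; returns the number of frames sent."""
    frame_len = samples_per_frame(sample_rate_hz, frame_us)
    sent = 0
    for seq, frame in enumerate(split_frames(pcm, frame_len)):
        send(seq, encode(frame))  # one encoded frame per scheduled interval
        sent += 1
    return sent


# Stand-ins: 1000 captured samples, a dummy encoder, and a list as the "radio".
outbox = []
count = broadcast_pipeline(list(range(1000)), 48_000, 10_000,
                           encode=lambda frame: bytes(4),
                           send=lambda seq, payload: outbox.append((seq, payload)))
print(count, [seq for seq, _ in outbox])
```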

Transmitters appear in two common forms.

- Integrated systems embed broadcast capability directly into consumer or embedded devices. Televisions, smartphones, tablets, media players, and infotainment systems can incorporate Auracast transmission as part of their native audio pipeline.

- Bridge systems function as external broadcast units. A bridge accepts audio from analog or digital inputs such as line-in connectors, HDMI audio extractors, USB audio interfaces, or digital audio buses. It encodes incoming audio and transmits it through LE Audio broadcast streams. Bridge systems are common in public venues where legacy equipment must interface with modern wireless distribution.

Receivers must support LE Audio decoding and Auracast reception. Many recent earbuds, hearing aids, communication devices, and smartphones include compatible chipsets capable of BIS synchronization and LC3 decoding.

At the software layer, operating systems must integrate LE Audio features with existing audio frameworks. This includes stream discovery, user selection interfaces, decoding pipelines, and routing of audio to playback hardware. Successful deployment depends on coordinated hardware and embedded software development across firmware, protocol stacks, and platform integration layers.

Where is Auracast used in real deployments?

Public display environments benefit immediately from broadcast audio. Televisions in gyms, sports arenas, airport lounges, and waiting areas often run silently. With Auracast, each display can transmit its audio feed directly. Listeners use personal earbuds or hearing devices that support LE Audio without relying on venue-owned hardware.

Assistive listening systems gain flexibility. Traditional induction loops and infrared receivers require specialized equipment. Auracast enables direct digital reception on compatible hearing aids and LE Audio earbuds, reducing infrastructure complexity and improving audio clarity.

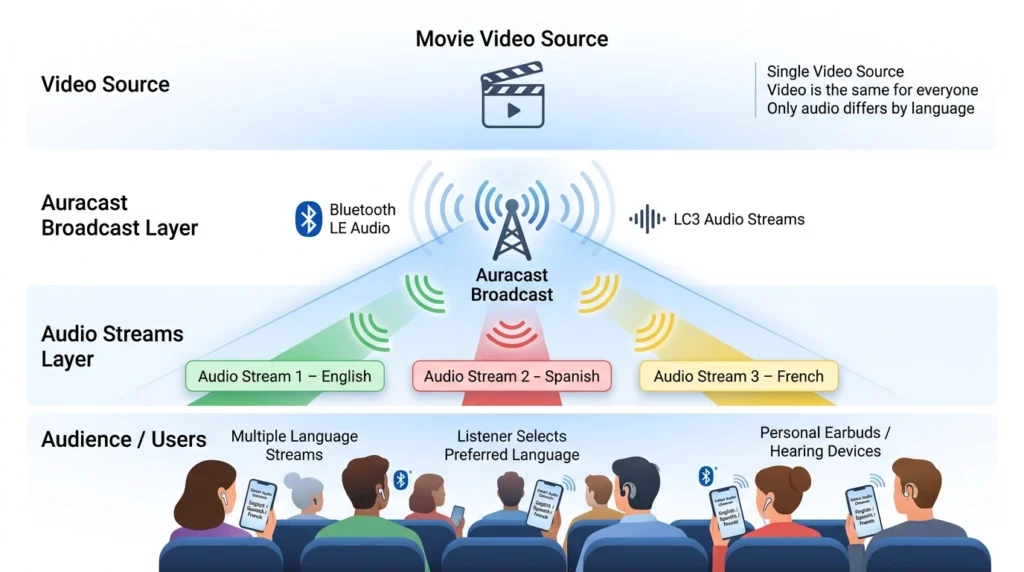

Conference and event venues can support simultaneous interpretation through multiple audio streams within a single broadcast group. Attendees select their preferred language channel without manual equipment distribution.
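Language selection within a group can be as simple as matching a listener's preferences against the streams on offer. The sketch below assumes each stream is described by a language code. The mapping, the codes, and the `pick_stream` helper are hypothetical and only illustrate the selection logic.

```python
from typing import Dict, List, Optional


def pick_stream(languages_by_stream: Dict[int, str], preferred: List[str]) -> Optional[int]:
    """Return the stream index for the first preferred language that is offered."""
    for language in preferred:
        for stream_index in sorted(languages_by_stream):
            if languages_by_stream[stream_index] == language:
                return stream_index
    return None  # nothing matched; the caller decides on a fallback


# Hypothetical interpretation feeds in one broadcast group.
feeds = {1: "en", 2: "fr", 3: "de"}
print(pick_stream(feeds, ["es", "fr"]))
```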

Media and entertainment environments can apply the same principle. A single video source can be paired with multiple synchronized audio streams carrying different languages. Viewers select their preferred language feed on their personal listening device without requiring separate receivers or dedicated distribution hardware.

Multi-language audio distribution using Auracast broadcast

Educational institutions can transmit instructor audio directly to student devices in lecture halls and auditoriums. This improves intelligibility in large rooms and supports students who rely on personal assistive listening tools.

Personal devices also support shared listening. A smartphone can broadcast music, podcasts, or media audio to nearby receivers. Friends and family can listen simultaneously without managing multiple Bluetooth connections.

Industrial and field operations can also benefit from broadcast audio distribution. In mining sites, large construction zones, and manufacturing facilities, teams work across wide areas under high noise conditions. Auracast can distribute live audio feeds from control rooms or communication systems directly to headsets. Safety announcements and operational updates can reach multiple personnel simultaneously without requiring individual radio connections.

What does Auracast mean for the future of Bluetooth audio systems?

Auracast extends Bluetooth audio beyond private listening while preserving compatibility with traditional device-to-device links. Communication devices, headphones/earbuds, and hearing aids can support both connected-audio and broadcast-reception modes.

Adoption depends on readiness across the technology stack. Hardware platforms must implement isochronous channel support. Firmware must manage broadcast scheduling, stream configuration, and timing control. Operating systems must provide application interfaces for discovery and stream management. As device ecosystems mature, broadcast audio becomes viable for public media distribution, assistive listening, and group listening scenarios that previously required specialized systems.

MosChip supports the development of LE Audio and Auracast-enabled devices, including designs that integrate multi-audio streaming over an audio bus (I2S TDM), across the full engineering stack. Its Device Software Engineering capability, under the Product Engineering umbrella, spans firmware design and driver development for Bluetooth controllers, radio interfaces, and device platforms, along with integration of LE Audio protocol layers. Hardware design and system-level validation are carried out across hardware, software, interoperability, and performance environments to ensure reliable deployment. MosChip further shortens product integration cycles through ProductXcelerate Blueprints, supported by ready-to-use Agentic AI cores and GenAIoT platforms that assist device software development and platform integration.

For Bluetooth Classic, LE Audio, and Auracast development or integration support, connect with us.


Sandesh D holds a master's degree and serves as Associate Vice President - Software & Systems Design at MosChip Technologies, with over two decades of experience in embedded software and systems design. His industry experience covers embedded platforms, pre- and post-silicon validation, and real-time and system software development across the wireless, consumer electronics, and automotive domains, in technical and techno-managerial roles focused on system software management. Sandesh has played a pivotal role in enhancing the efficiency and reliability of embedded systems, effectively bridging the gap between hardware and software. He also excels in technical architecture and in execution across multiple dependencies, bringing clarity to complex, ambiguous situations through stakeholder interaction and sound technical analysis. He has supported silicon wake-up and customer ramp from R&D, working with PMO, architecture, and customer-facing teams across geographies.