A PoV on AI-Native Crash Intelligence for Safe Mobility

Modern vehicles are quickly becoming software-defined systems, driven by the growing need for safety through technologies like ADAS and event data recorders. While these systems have significantly improved accident prevention and response, crashes can still occur. When they do, current solutions often fail to clearly explain the reasons behind them, as they depend heavily on isolated sensor logs. To truly understand crashes, we need to go beyond fragmented data and consider the complete driving context, including human behavior, environmental factors, vehicle condition, and system interactions. This blog explores AI-native crash intelligence, showing how a digital black box can transform telemetry into actionable, legally traceable insights that enhance safety, accountability, and mobility outcomes.

In this article, Vinod Kumar Galla, Director of PES, shares his thoughts on building AI-powered crash intelligence systems. Drawing on his extensive experience in automotive platforms, functional safety, and edge AI, he explains why incident analysis must move beyond integrating multiple sensors toward understanding driver fitness and emotions, securing data transmission, and adopting safety-compliant computing architectures. His insights provide a practical roadmap for achieving safer roads, quicker claims processing, and data-driven trust in intelligent mobility systems.

Q1. Why are today’s ADAS and EDRs insufficient for explaining incidents, and why do we need an AI-native digital black box to capture human and road context?

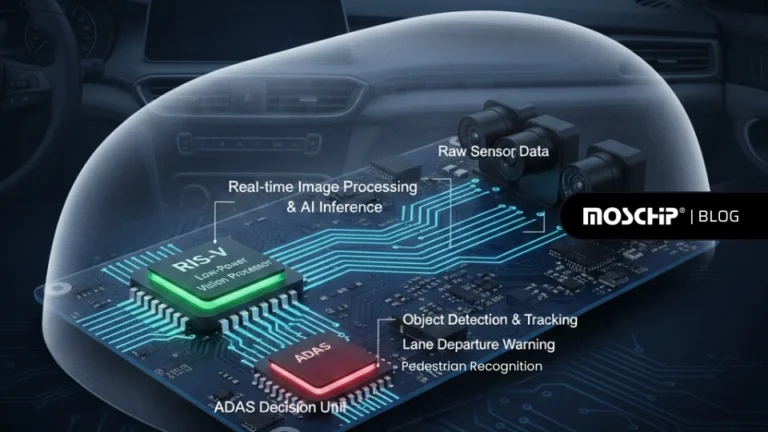

A1. ADAS, which relies on cameras, radar, and other sensors, is designed to enhance driving safety under normal conditions. However, in adverse environments such as poor weather, these systems can suffer from degraded perception, leading to inaccurate data acquisition and potential system failures.

Similarly, Event Data Recorders (EDRs) capture only a limited set of parameters, such as vehicle speed, brake status, accelerator position, airbag deployment, and seatbelt usage, within a constrained pre- and post-crash window. This restricted dataset often makes it difficult to reconstruct the true cause of an incident with high confidence.

Both ADAS and EDR systems face inherent limitations, including sensor inaccuracies, signal loss, environmental interference, hardware constraints, and algorithmic boundaries. As a result, they lack the contextual depth required for comprehensive event analysis.

Accurate incident understanding requires a broader spectrum of data, including driver behavior and distraction, road and weather conditions, and detailed vehicle diagnostics. Traditional systems do not capture critical factors such as the driver’s emotional state, physiological indicators, or continuous in-cabin and external situational context.

To close this gap, we have an AI-native digital black box solution that analyzes crash data along three dimensions (driver, in-cabin view, and 360° exterior view) and generates an AI-based police report for each crash event. The report aids insurance companies and can serve as supporting evidence in court.

Q2. How can the AI-native digital black box ensure pre-trip driver fitness and detect/mitigate emotional shifts like aggression or sadness during driving?

A2. Before the vehicle starts, the system performs a comprehensive driver fitness assessment using key physiological indicators such as blood sugar levels, blood pressure, eyesight condition, alcohol presence, and stress levels. If any parameter deviates from safe thresholds, the system restricts vehicle ignition to prevent potential risk.
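As a rough illustration of the pre-trip gate described above, the check can be sketched as a range comparison per indicator. The field names and numeric ranges below are hypothetical placeholders chosen for this example, not clinical or regulatory values, and the sketch assumes that a missing reading should also block ignition.

```python
# Hypothetical safe ranges for illustration only; NOT clinical or regulatory values.
SAFE_RANGES = {
    "blood_glucose_mg_dl": (70.0, 180.0),
    "systolic_mm_hg": (90.0, 140.0),
    "blood_alcohol_pct": (0.0, 0.02),
    "stress_index": (0.0, 0.7),
}


def out_of_range(readings: dict[str, float]) -> list[str]:
    """Return the indicators that are missing or outside their safe range."""
    violations = []
    for name, (low, high) in SAFE_RANGES.items():
        value = readings.get(name)
        # Assumption: fail closed, so a missing reading counts as a violation.
        if value is None or not (low <= value <= high):
            violations.append(name)
    return violations


def ignition_allowed(readings: dict[str, float]) -> bool:
    """Allow ignition only when every indicator is present and in range."""
    return not out_of_range(readings)
```

Returning the list of violating indicators, rather than a bare yes/no, would let the system tell the driver which reading blocked the start.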

During the journey, the system continuously monitors the driver’s emotional and behavioral states. Sudden or prolonged emotional shifts, such as aggression, anxiety, or distress, can impair judgment and reaction time. When such patterns are detected, the system initiates corrective interventions, including playing calming audio to help stabilize the driver.

If the condition persists or escalates, the system activates a limp-home mode to limit vehicle performance and reduce risk. Simultaneously, alerts are sent to the vehicle owner or designated contacts to ensure timely awareness and intervention.
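The escalation path above (calming audio first, then limp-home mode with alerts if the state persists) can be sketched as a small decision function. This is a minimal sketch under assumptions: the risk score is taken to come from an upstream emotion model, and the 0.8 score threshold and the 10 s and 60 s durations are hypothetical values picked for illustration.

```python
from enum import Enum
from typing import Iterable, Iterator


class Action(Enum):
    NONE = "none"
    CALMING_AUDIO = "calming_audio"
    LIMP_HOME_AND_ALERT = "limp_home_and_alert"


def next_action(risky_seconds: float,
                audio_after_s: float = 10.0,
                limp_home_after_s: float = 60.0) -> Action:
    """Map how long the risky state has lasted to an intervention."""
    if risky_seconds >= limp_home_after_s:
        return Action.LIMP_HOME_AND_ALERT
    if risky_seconds >= audio_after_s:
        return Action.CALMING_AUDIO
    return Action.NONE


def interventions(samples: Iterable[tuple[float, float]],
                  risk_threshold: float = 0.8) -> Iterator[tuple[float, Action]]:
    """Consume (timestamp_s, risk_score) pairs and yield (timestamp_s, Action)."""
    risky_since = None
    for ts, score in samples:
        if score >= risk_threshold:
            if risky_since is None:
                risky_since = ts  # start of the current risky episode
            duration = ts - risky_since
        else:
            risky_since = None    # driver recovered; reset the episode
            duration = 0.0
        yield ts, next_action(duration)
```

For example, a score that stays above the threshold from t = 0 s to t = 60 s yields no action at first, calming audio from 10 s, and limp-home with alerts at 60 s. A single sample below the threshold resets the sequence.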

Q3. How are 360° sensors, infotainment, V2X, CAN FD, GPS/IMU, and ADAS events fused into a synchronized ±5-minute evidence window?

A3. While the vehicle is in drive mode, data from multiple sources, including 360° surround sensors, in-cabin monitoring, driver fitness and emotional state, ADAS events, CAN FD signals, infotainment inputs, and GPS/IMU telemetry, is continuously acquired and time-synchronized.
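One simple way to picture the time-synchronization step is a merge of per-source streams into a single timeline. The sketch below assumes every source already stamps its samples against a shared clock and delivers them in timestamp order. The `Sample` type and its field names are hypothetical, introduced only for this example.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Sample(NamedTuple):
    t_ns: int      # timestamp on the shared clock, in nanoseconds (assumed)
    source: str    # e.g. "can_fd", "gps_imu", "cabin_cam"
    payload: dict  # source-specific data


def merge_streams(*streams: Iterable[Sample]) -> Iterator[Sample]:
    """Lazily merge already-sorted per-source streams into one timeline."""
    return heapq.merge(*streams, key=lambda s: s.t_ns)
```

Because `heapq.merge` is lazy, the merged timeline can be consumed sample by sample without loading every stream into memory first.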

Upon detection of a critical event (such as a crash or anomaly), the system automatically extracts a ±5-minute evidence window, capturing both pre- and post-event context. This dataset includes 360° external views, in-cabin footage, driver behavior and emotional state, vital health parameters, and precise vehicle dynamics and location data.
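The ±5-minute extraction can be sketched with a rolling buffer that normally keeps only the most recent five minutes and, once an event is flagged, keeps recording until the post-event half of the window has elapsed. The class and method names are hypothetical. The sketch assumes samples arrive in timestamp order and handles one event at a time.

```python
from collections import deque


class EvidenceBuffer:
    """Rolling buffer that yields a +/- half_width_s window around an event."""

    def __init__(self, half_width_s: float = 300.0):
        self.half_width_s = half_width_s
        self._samples = deque()  # (timestamp_s, payload), oldest first
        self._event_t = None     # timestamp of the pending event, if any

    def trigger(self, event_t: float) -> None:
        """Mark a critical event; ignored while another event is pending."""
        if self._event_t is None:
            self._event_t = event_t

    def add(self, t: float, payload: dict):
        """Store one sample; return the evidence window once it is complete."""
        self._samples.append((t, payload))
        if self._event_t is None:
            # No event pending: retain only the pre-event history we may need.
            while self._samples[0][0] < t - self.half_width_s:
                self._samples.popleft()
            return None
        if t < self._event_t + self.half_width_s:
            return None  # still collecting post-event context
        lo = self._event_t - self.half_width_s
        hi = self._event_t + self.half_width_s
        window = [(ts, p) for ts, p in self._samples if lo <= ts <= hi]
        self._event_t = None
        return window
```

Pruning is paused while an event is pending, so the pre-event half of the window cannot be discarded before the post-event half has been collected.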

The aggregated data is securely packaged and stored locally for integrity, while simultaneously being transmitted to the cloud for advanced AI-driven analysis. This enables accurate event reconstruction, root cause identification, and generation of actionable insights.

Achieving this level of real-time, synchronized multi-modal fusion, while running multiple perception and behavioral models, requires a high-performance, safety-compliant compute foundation capable of deterministic processing and domain isolation. This is where S32G-based domain architectures play a critical role.

Q4. How does structured telemetry produce a legally traceable AI-generated police report, and what outcomes follow: faster claims, safer driving, and better fleet insights?

A4. Structured and legally traceable telemetry data is collected following the 3A principles (Accurate, Authentic, and Attributable) and is recorded as JSON-based open telemetry logs. These logs are stored on a tamper-proof device using WORM (Write Once Read Many) storage, and each log is cryptographically hashed with SHA-256 to support data integrity and non-repudiation.
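To show how SHA-256 hashing can make JSON telemetry logs tamper-evident, the sketch below chains each record to the hash of the previous one. The chaining scheme and the field names (`prev_hash`, `record`, `hash`) are assumptions made for this example; the text above specifies only SHA-256 hashing and WORM storage. A hash chain on its own gives tamper evidence. Non-repudiation would typically also require signing the hashes with a device-held key, which is not shown here.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) keeps the hash reproducible.
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain: list[dict], record: dict) -> dict:
    """Append a telemetry record, linking it to the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"prev_hash": prev_hash, "record": record,
             "hash": _digest(prev_hash, record)}
    chain.append(entry)
    return entry


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing any stored record after the fact causes `verify_chain` to return `False`, because the recomputed digest no longer matches the stored one.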

The AI-native digital black box solution performs three-dimensional crash data analysis, incorporating driver data, interior cabin view, and a 360° exterior vehicle view. Based on this analysis, the system generates an AI-assisted First Information Report (FIR) for crash events. This report can assist insurance companies in claim assessment and may serve as supporting digital evidence in legal proceedings.

The solution is designed to comply with standard automotive telemetry and legal requirements. The generated FIR is made available to both the driver and the fleet owner. The system supports safer driving practices, faster insurance claim processing, and improved fleet management.

Q5. How do S32G domains meet ASIL-D requirements to run robust ADAS, pothole, drowsiness, and affect models across real-world conditions?

A5. The S32G platform acts as a central gateway and domain controller, connecting heterogeneous subsystems including cameras, radar, LiDAR, ECUs, V2X, and vehicle networks, while ensuring secure and reliable data flow across domains. Its heterogeneous multicore architecture enables the concurrent execution of AI-driven perception and safety-critical control functions.

To satisfy ASIL-D requirements, the platform incorporates hardware-enforced safety mechanisms such as lockstep processing, watchdogs, error correction (ECC), and fault detection with fail-operational/fail-safe responses. This ensures deterministic behavior even under faults, signal degradation, or environmental variability.

The S32G also supports high-throughput, low-latency data acquisition and processing, enabling real-time ingestion and fusion of ADAS sensor data, driver monitoring inputs, and vehicle telemetry. This capability is essential for running robust workloads such as L2/L3 perception, pothole detection, driver drowsiness detection, and affect analysis under diverse real-world conditions.

Additionally, its secure gateway capabilities allow validated data to be packaged and transmitted to the cloud for advanced AI analytics, extending edge intelligence with deeper insights such as road condition mapping and behavioral analysis.

MosChip has expertise in automotive engineering, delivering end-to-end solutions across ADAS, digital cockpit, vehicle connectivity, functional safety (ISO 26262), AUTOSAR, and software-defined vehicle (SDV) platforms. Its capabilities span silicon-to-system design, embedded systems, edge AI integration, high-performance compute, and secure data pipelines. With strong domain knowledge, MosChip enables scalable, intelligent, and safety-compliant mobility solutions, accelerating innovation for next-generation connected and autonomous vehicle ecosystems.

To know more about MosChip’s QA capabilities, drop us a line, and our team will get back to you.


Vinod Kumar Galla is the Director – PES at MosChip, where he leads automotive and embedded systems engineering with a strong focus on next‑generation mobility platforms. With over 25 years of experience in embedded engineering and more than 18 years in automotive software and systems, he brings deep expertise in system architecture, AUTOSAR, ADAS, functional safety (FuSa), ASPICE, and verification and validation. Vinod has held senior technical leadership roles across global organizations, driving complex product development and engineering solutions. Passionate about building safety‑critical, scalable automotive systems, he actively mentors engineering teams and contributes to advancing intelligent, software‑defined vehicles.