Driving Rapid QA Cycles through AI and Generative AI Integration

Quality Assurance (QA) is entering a new phase of evolution, drawing from the strengths of manual testing and conventional test automation. Traditionally, QA for software and embedded systems relied on fixed test cases, human checks, and strict testing schedules, which left the QA process lagging behind fast-paced technological advancements.

While traditional test automation introduced greater efficiency by automating repetitive tasks, it frequently struggled to keep up with dynamic changes or handle complex situations, requiring a lot of manual maintenance and human effort.

Generative AI (GenAI) is transforming Quality Assurance by providing reasoning-based test intelligence and enabling smart, adaptive automation. Unlike the traditional script-based approach, GenAI tools can dynamically create test cases, anticipate defects, and validate edge scenarios that human testers often overlook. This evolution makes QA more flexible and intelligent, aligning it better with the needs of modern development.

GenAI has become a crucial part of the Software Testing Life Cycle (STLC), which includes Planning, Design, Execution, and Reporting. The way we create tests is changing from traditional manual methods to scenarios generated by AI. Instead of static cycles, we now have adaptive test suites that learn from past results and improve on their own. This transition to AI-driven, self-learning systems enhances product reliability through smarter and more resilient testing.

This blog explores how GenAI is changing the landscape of QA throughout the STLC, providing examples of its real-world effects.

The Need for GenAI-Driven QA Innovation

Quality Assurance is at a breaking point. Modern software and embedded systems are evolving at a pace that traditional QA processes simply can’t keep up with. Release cycles have shortened from months to just days, architectures have transitioned to microservices and distributed edge systems, and user experiences now encompass a variety of devices, sensors, and cloud platforms.

In this environment, even well-established automation tools face challenges because they are inherently static: they rely on scripts, depend on predefined inputs, and can easily fail when the system being tested changes.

This growing gap makes GenAI-driven quality assurance a necessity rather than a luxury. Teams require systems that can understand requirements without waiting for them to be documented, adjust to changes in user interfaces or APIs without constant human intervention, and predict potential failures well before they occur in production. While traditional automation speeds up execution, GenAI enhances comprehension.

Generative AI adds a crucial layer of intelligence that has been missing in quality assurance. It can evaluate code, analyze past defects, leverage domain knowledge, and recognize execution patterns to identify risks that traditional scripted automation might miss. This technology enables the creation of context-aware tests, the generation of realistic synthetic data that complies with standards, and the dynamic adaptation of test suites as systems change.

Chain-of-Thought prompting improves the logical quality of generated test cases by asking the model to reason step by step before it writes them. Meanwhile, retrieval-augmented generation (RAG) can shorten failure analysis by clustering logs and associating them with recent commits. In addition, AI risk scoring of new code commits and regression test prioritization can speed up development cycles without sacrificing test coverage.

This creates a partnership between AI and humans: the AI manages the repetitive, data-heavy work of creating tests, debugging, and predicting risk, while humans provide the strategic analysis and quality governance. The result is a self-adaptive and self-learning Quality Assurance ecosystem that produces improved delivery speed, reliability, and competitive advantage in the creation of software and embedded systems.

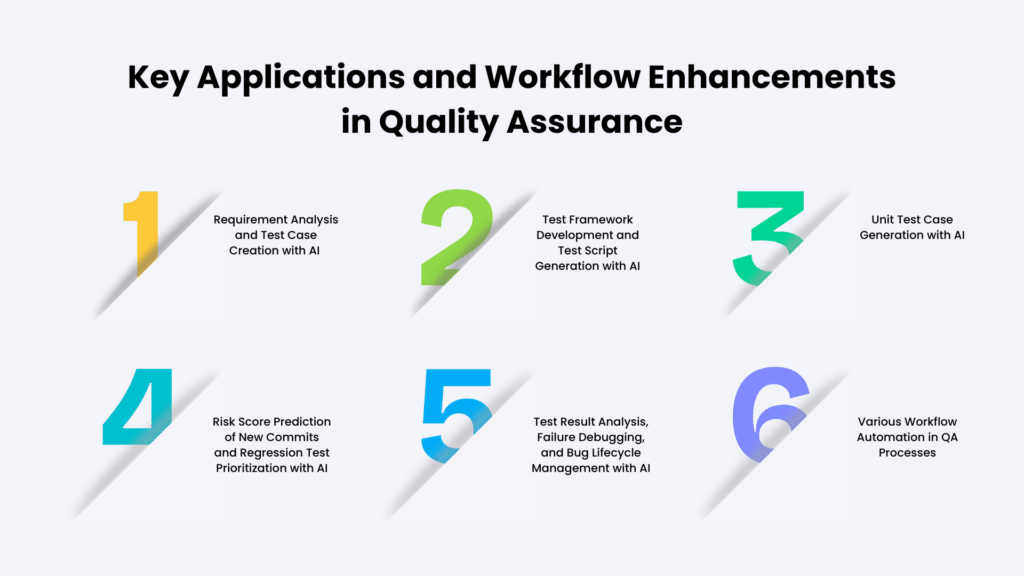

Key Applications and Workflow Enhancements in Quality Assurance

Given increased interest in AI-enabled innovation, many key applications and workflow enhancements are already changing the Quality Assurance lifecycle. Leveraging AI and Generative AI, these applications power testing approaches that are smarter, faster, and more flexible.

By automating complex QA work and generating predictive insights, these applications let QA teams focus on higher-value work while also accelerating delivery timelines.

Some of the key applications and processes are outlined in the sections that follow.

1. Requirement Analysis and Test Case Creation with AI

Using AI for requirement analysis and test case development can greatly accelerate the early steps of QA planning. Deriving test cases from in-depth functional specification documents (FSDs) is lengthy and error-prone. AI models accessed through an API (such as the OpenAI API) can automate much of this work.

A uniform format in the requirements document is very important for efficiency. Consistently structured input helps the AI system correctly identify the essential sub-requirements, inputs, and expected outputs. More advanced reasoning methods, such as Chain-of-Thought (CoT) prompting, help the AI handle more complex logic, which leads to accurate, traceable test steps.

The AI-driven solution reliably organizes the generated test cases into a structured document, such as an Excel file, which includes columns for the Requirement Traceability Matrix (RTM) identifier, test steps, expected results, test data, and test case type (like positive or negative). This organized structure can then be easily extracted for direct import into a test management tool, such as TestRail or TestLink. A manual review of the generated test cases is still crucial before moving into production to ensure quality and trustworthiness. Even with this review step, creating the test cases takes 50% less time overall.
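As a rough illustration of the structured output described above, the sketch below builds a CoT-style prompt for one requirement and turns a model's JSON answer into rows that can be exported. The column labels, the prompt wording, and the function names are all assumptions made for this example, and CSV is used as a simple stand-in for the Excel file. The call to the model itself is left out, and the code assumes the JSON part of the answer has already been separated from the model's reasoning text.

```python
import csv
import io
import json

# Column labels mirror the structure described in the text; the exact names are assumptions.
COLUMNS = ["rtm_id", "test_steps", "expected_result", "test_data", "test_type"]


def build_cot_prompt(requirement_id: str, requirement_text: str) -> str:
    """Build a Chain-of-Thought style prompt for a single requirement."""
    return (
        f"Requirement {requirement_id}:\n{requirement_text}\n\n"
        "Think step by step: list the sub-requirements, then the inputs, "
        "then the expected outputs. Finally, return a JSON array of test cases "
        f"with the keys {', '.join(COLUMNS)}. "
        "Include both positive and negative cases."
    )


def rows_from_model_output(raw_json: str) -> list[dict]:
    """Keep only test cases that have every column and a valid test type."""
    rows = []
    for case in json.loads(raw_json):
        has_all_columns = all(key in case for key in COLUMNS)
        if has_all_columns and case["test_type"] in ("positive", "negative"):
            rows.append({key: str(case[key]) for key in COLUMNS})
    return rows


def to_csv(rows: list[dict]) -> str:
    """Serialize the rows to CSV text, ready for review or import."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Dropping malformed cases instead of repairing them keeps the manual review focused on content rather than formatting.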

2. Test Framework Development and Test Script Generation with AI

In automation testing, building robust frameworks can sometimes hinder progress due to the need for manual setup of essential modules, extensive boilerplate coding, and careful library integration. These tasks can be quite time-consuming and require a lot of attention to detail regarding compatibility and maintainability.

On the other hand, generative AI tools can significantly accelerate the process by automating routine coding steps, proposing optimized architectures, and quickly generating reusable components. This allows teams to concentrate on developing and testing advanced features rather than getting bogged down in repetitive foundational work.

- Framework Development Support: When beginning the setup, tools like GitHub Copilot can rapidly produce boilerplate code, library functions, and portions of the test framework, which will help speed things along in the early stages of the setup.

- Automated Test Script Creation: Once the essential libraries and test functions are set up, Copilot can assist in crafting test scripts that leverage the features of the framework. Going a step further, OpenAI APIs can automatically create an executable test script from just a few instructions. This requires providing the model with the documentation and the function signatures of the test framework. With that context, the AI can follow how the test framework is structured and write executable scripts that are specific to the application being tested.
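One possible way to give a model the framework's documentation and function signatures is to collect them with Python's `inspect` module and place them in the prompt. The sketch below shows only that context-building step. The two framework helpers (`open_session`, `send_command`) and the prompt wording are hypothetical and exist only to make the example runnable.

```python
import inspect


def describe_framework(functions) -> str:
    """Render each function's signature and first docstring line as prompt context."""
    lines = []
    for func in functions:
        doc = (inspect.getdoc(func) or "").splitlines()
        summary = doc[0] if doc else "(no docstring)"
        lines.append(f"{func.__name__}{inspect.signature(func)}: {summary}")
    return "\n".join(lines)


# Hypothetical framework helpers, defined here only for illustration.
def open_session(host: str, port: int = 22):
    """Open a connection to the device under test."""


def send_command(session, command: str, timeout: float = 5.0):
    """Send a command and return the device response."""


def build_script_prompt(instruction: str, functions) -> str:
    """Combine the framework description with a short test instruction."""
    return (
        "Write a test script using only these framework functions:\n"
        f"{describe_framework(functions)}\n\n"
        f"Task: {instruction}\n"
    )
```

Restricting the prompt to real signatures is one way to reduce the chance that the generated script calls functions the framework does not have.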

3. Unit Test Case Generation with AI

Unit testing is very important, but it takes a lot of developer time. An OpenAI-assisted solution can respond automatically to GitHub Pull Request (PR) events. It analyzes the newly committed source code, develops suitable unit test cases, and forwards them for peer review. Once reviewed, the system can run these tests, report pass/fail outcomes, and generate comprehensive code coverage reports, which can greatly accelerate the development cycle and maintain the quality of the foundational code.
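The analysis step could begin by finding which functions in the newly committed code need tests. The sketch below uses Python's `ast` module for that, assuming the committed file is Python and that names starting with an underscore are private and can be skipped. Both are assumptions for this example, and the PR event handling and the model call are omitted.

```python
import ast


def functions_to_test(source: str) -> list[tuple[str, str]]:
    """Return (name, source code) for each public function in a source file."""
    tree = ast.parse(source)
    nodes = [
        node
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and not node.name.startswith("_")
    ]
    # Sort by line number so the result follows the order in the file.
    nodes.sort(key=lambda node: node.lineno)
    return [(node.name, ast.get_source_segment(source, node)) for node in nodes]


def build_unit_test_prompt(name: str, code: str) -> str:
    """Ask for unit tests for one function; the wording is illustrative only."""
    return (
        f"Write unit tests for the function `{name}` shown below. "
        "Cover normal inputs, boundary values, and invalid inputs.\n\n"
        f"{code}\n"
    )
```

Sending one function per request keeps each prompt small and makes the generated tests easier to review individually.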


4. Risk Score Prediction of New Commits and Regression Test Prioritization with AI

As development cycles shorten, the QA team is pressured to test more, and faster. Evaluating the effects of recent commits and manually prioritizing regression tests is tedious, difficult to scale, and prone to mistakes. A machine-learning (ML)-enabled solution relieves this burden by predicting the risk of each new commit.

The machine learning algorithm draws upon the project history in the repository, including prior commits, the patterns of bugs being addressed, code churn, the complexity of files, and historical Continuous Integration failures, to compute a risk value for each commit or pull request.

Higher risk values correspond to a higher probability of introducing defects. This shifts the QA process from reactively examining commits to a predictive approach, in which risky commits are flagged for review before they are merged into the code base.
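To make the idea of a risk value concrete, the sketch below scores a commit with a logistic function over a handful of the signals listed above. The feature names, weights, and bias are invented for illustration. A real model would learn them from the repository's history, and it may use a different algorithm entirely.

```python
import math
from dataclasses import dataclass


@dataclass
class CommitFeatures:
    lines_changed: int            # code churn
    files_touched: int
    avg_file_complexity: float    # e.g. mean cyclomatic complexity of touched files
    past_bug_fixes_in_files: int  # earlier bug-fix commits in the same files
    recent_ci_failures: int       # recent CI failures involving these files


# Hypothetical weights; in practice these would be fitted on historical data.
WEIGHTS = {
    "lines_changed": 0.004,
    "files_touched": 0.08,
    "avg_file_complexity": 0.05,
    "past_bug_fixes_in_files": 0.15,
    "recent_ci_failures": 0.3,
}
BIAS = -3.0


def risk_score(features: CommitFeatures) -> float:
    """Map commit features to a value between 0 and 1 (higher means riskier)."""
    z = BIAS + sum(weight * getattr(features, name) for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))
```

A score like this can then be compared against a threshold chosen by the team to decide which commits get extra review before merging.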

The system also prioritizes regression tests to further speed up QA cycles. It keeps track of test cases, associated files, and previous failures to identify which tests are most relevant for each new change. Instead of running the regression suite in a predefined order, the most impacted tests run first because they have the highest potential to uncover bugs. This method allows for faster bug detection and helps to keep overall costs under control.
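The prioritization described above can be approximated with a simple ordering rule: run first the tests that cover the most changed files, and break ties by how often each test has failed before. The data layout (`name`, `covers`, `failure_rate`) is an assumption made for this sketch.

```python
def prioritize(tests: list[dict], changed_files: list[str]) -> list[str]:
    """Order test names by overlap with the changed files, then by past failure rate."""
    changed = set(changed_files)

    def relevance(test: dict) -> tuple[int, float]:
        overlap = len(set(test["covers"]) & changed)
        return (overlap, test["failure_rate"])

    return [test["name"] for test in sorted(tests, key=relevance, reverse=True)]


# Example usage with made-up test metadata.
suite = [
    {"name": "test_ui_login", "covers": ["ui/login.py"], "failure_rate": 0.02},
    {"name": "test_db_pool", "covers": ["db/pool.py", "db/config.py"], "failure_rate": 0.10},
    {"name": "test_db_query", "covers": ["db/pool.py"], "failure_rate": 0.30},
]
order = prioritize(suite, changed_files=["db/pool.py", "db/config.py"])
print(order)
```

Here `test_db_pool` runs first because it covers both changed files, followed by `test_db_query`, while the unrelated UI test runs last.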

5. Test Result Analysis, Failure Debugging, and Bug Lifecycle Management with AI

AI solutions can significantly enhance the way we analyze test results and manage the bug lifecycle.

Automated Summarization and Clustering: An AI system can review the outcomes of daily regression tests, pinpointing new failures and improvements in test results. For large-scale regression, a Retrieval-Augmented Generation (RAG) model can process the Regression Test Plan and failure logs.

This allows the AI to intelligently group failures (for instance, failures that occur mainly in database connectivity tests rather than UI tests) and identify unique failures by examining the log content.
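The grouping idea can be shown without any AI model at all. The sketch below is a plain rule-based stand-in, not a RAG pipeline: it normalizes each failure message by masking numbers and hex values, then groups tests that share the same normalized signature. The function names and log formats are assumptions for this example.

```python
import re
from collections import defaultdict


def signature(log_line: str) -> str:
    """Mask variable parts of a log line so similar failures look identical."""
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", log_line)
    masked = re.sub(r"\d+", "<n>", masked)
    return masked.strip().lower()


def cluster_failures(failures: dict[str, str]) -> dict[str, list[str]]:
    """Group test names by the normalized signature of their failure message."""
    groups = defaultdict(list)
    for test_name, log_line in failures.items():
        groups[signature(log_line)].append(test_name)
    return dict(groups)


def unique_failures(clusters: dict[str, list[str]]) -> list[str]:
    """Return tests whose failure signature is not shared with any other test."""
    return [tests[0] for tests in clusters.values() if len(tests) == 1]
```

A language model can add value on top of this kind of grouping by summarizing each cluster and relating it to the relevant part of the test plan.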

Root Cause Suggestion: With the ability to examine the differences in commits between two daily runs, the AI can correlate log analysis with the latest code changes to pinpoint potential commits that might have led to the failures. This streamlined approach to debugging helps to speed up the entire QA process.
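A very simple version of this correlation is to check which commits between the two runs touched files that are named in the failure logs. The sketch below does exactly that with string matching. The commit identifiers, the data layout, and the function name are hypothetical, and an AI-based system would use richer signals than file paths alone.

```python
def suspect_commits(log_text: str, commits: dict[str, list[str]]) -> list[tuple[str, list[str]]]:
    """Rank commits by how many of their changed files appear in the failure log.

    `commits` maps a commit id to the list of file paths it changed.
    """
    ranked = []
    for commit_id, changed_files in commits.items():
        hits = [path for path in changed_files if path in log_text]
        if hits:
            ranked.append((commit_id, hits))
    # Commits that touch more of the files seen in the log come first.
    ranked.sort(key=lambda item: len(item[1]), reverse=True)
    return ranked
```

The output is a shortlist for a human to inspect, not a verdict: a commit can break a test without its files ever appearing in a stack trace.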

6. Various Workflow Automation in QA Processes

QA professionals can quickly build workflow automation utilizing coding assistants such as GitHub Copilot connected to Integrated Development Environments (IDEs) (e.g., Visual Studio Code, PyCharm):

- Monitoring Jira Stages: Solutions could be developed to retrieve the status, assignees, and estimates of Jira issues so that release progress can be tracked in real time.

- Performance Tracking and Notification: Automated monitors could alert teams if regression test cases take longer than average execution time.

- Unified Dashboards: It can be beneficial to consolidate the many separate email notifications into one dashboard that collects all key information: nightly pass/fail rates, test resource use, disk space use, new Jira issues, issues with test setups, etc. The dashboard gives a single top-level view and links to detailed reports for anyone who needs to dig deeper into a testing checkpoint.

- Report Generation: AI-assisted report generation automates the conversion of raw test data into structured reports, such as Excel or PDF files, and standardizes the document format for communication between stakeholders.

QA teams use utility scripts that directly connect to the Test Management System to remove the need for manual collection and compilation of raw test data and confirm the reliability of the report data. In addition, the ability to report on test performance trends helps promote operational efficiency by streamlining the QA process and increasing team productivity.

- Resource Monitoring: Automated dashboards and alerts continuously monitor the important metrics of the testing infrastructure, such as CPU usage, memory use, and disk space. Automated resource monitoring identifies usage anomalies, such as load imbalances and sudden memory spikes, before they cause failures, so they can be resolved quickly. This keeps testing productive and makes better use of resources.

- Cross-Platform Tool Integration: Integrating tools across platforms (e.g., Jira, GitHub, and Jenkins) creates a seamless automated environment for collaboration. In effect, each tool automatically performs actions on behalf of the others.

For example, when a pull request is merged on GitHub or when tests pass in continuous integration (CI), an automatic comment is added in Jira. This gives stakeholders immediate insight into the status of their project.

Additionally, automated feedback and regression triage help developers quickly assess what caused a failure, trace it back to its source, and resolve defects more rapidly throughout the software development lifecycle.
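As one small example of the utilities listed in this section, the sketch below covers the Performance Tracking and Notification item: it flags regression tests whose latest run took noticeably longer than their historical average. The threshold rule (mean plus two standard deviations) and the data layout are assumptions for this example, and sending the actual alert is left out.

```python
from statistics import mean, stdev


def slow_tests(history: dict[str, list[float]], latest: dict[str, float], k: float = 2.0) -> list[str]:
    """Return tests whose latest duration exceeds mean + k * stdev of past runs.

    Durations are in seconds. Tests with fewer than two past runs are skipped
    because a standard deviation cannot be computed for them.
    """
    flagged = []
    for name, duration in latest.items():
        past = history.get(name, [])
        if len(past) < 2:
            continue
        threshold = mean(past) + k * stdev(past)
        if duration > threshold:
            flagged.append(name)
    return flagged
```

Adding a margin above the plain average avoids alerting on normal run-to-run variation, and teams can tune `k` to match how noisy their test environment is.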

MosChip stands out by integrating AI-driven quality assurance with test automation frameworks and automation bots. To know more about our QA practices, contact us, and our team will get in touch with you.